Google search engine results pages (SERPs) can provide a lot of important data for you and your business but you most likely wouldn't want to scrape it manually. After all, there might be multiple queries you're interested in, and the corresponding results should be monitored on a regular basis. This is where automated scraping comes into play: you write a script that processes the results for you or use a dedicated tool to do all the heavy lifting.

In this article you'll learn how to scrape Google search results with Python. We will discuss three main approaches:

- Using the Scrapingbee API to simplify the process and overcome anti-bot hurdles (hassle-free)

- Using a graphical interface to construct a scraping request (that is, without any coding)

- Writing a custom script to do the job

We will see multiple code samples to help you get started as fast as possible.

Shall we get started?

You can find the source code for this tutorial on GitHub.

Why scrape search results?

The first question that might arise is "why in the world do I need to scrape anything?". That's a fair question, actually.

- You're an SEO tool provider and need to track positions for billions of keywords.

- You're a website owner and want to check your rankings for a list of keywords regularly.

- You want to perform competitor analysis. The simplest thing to do is to understand how your website ranks versus that other guy's website: in other words, you'll want to assess your competitor's positions for various keywords.

- It can also be important to understand what customers are into these days. What are they searching for? What are the modern trends? For this kind of search-interest and topic trend analysis, you can use our Google Trends API to pull time-series demand data and combine it with your scraped SERP results.

- If you're a content creator, analyzing potential topics to cover is key. What would your audience like to read about?

- You may also need to perform lead generation, monitor certain news, prices, or research and analyze a given field.

In fact, as you can see, there are many reasons to scrape the search results. But while we understand "why", the more important question is "how" which is closely tied to "what are the potential issues". Let's talk about that.

Challenges of scraping Google search results

Unfortunately, scraping Google search results is not as straightforward as one might think. Here are some typical issues you'll probably encounter:

Aren't you a robot, by chance?

I'm pretty sure I'm not a robot (mostly) but for some reason Google keeps asking me this question for years now. It seems he's never satisfied with my answer. If you've seen those nasty "I'm not a robot" checkboxes also known as "captcha" you know what I mean.

So-called "real humans" can pass these checks fairly easily but if we are talking about scraping scripts, things become much harder. Yes, you can think of a way to solve captchas but this is definitely not a trivial task. Moreover, if you fail the check multiple times your IP address might get blocked for a few hours which is even worse. Luckily, there's a way to overcome this problem as we'll see next.

Do you want some cookies?

If you open Google search home page via your browser's incognito mode, chances are you're going to see a "consent" page asking whether you are willing to accept some cookies (no milk though). Until you click one of the buttons it won't be possible to perform any searches. As you can guess, the same thing might happen when running your scraping script. Actually, we will discuss this problem later in this article.

Don't request so much from me!

Another problem happens when you request too much data from Google, and it becomes really angry with you. It might happen when your script sends too many requests too fast, and consequently the service blocks you for a period of time. The simplest solution is to wait, or to use multiple IP addresses, or to limit the number of requests, or... perhaps there's some other way? We're going to find out soon enough!

Lost in data

Even if you manage to actually get some reasonable response from Google, don't celebrate yet. Problem is, the returned HTML data contains lots and lots of stuff that you are not really interested in. There are all kinds of scripts, headers, footers, extra markup, and so on and so forth. Your job is to try and fetch the relevant information from all this gibberish but it might appear to be a relatively complex task on its own.

Problem is, Google tends to use not-so-meaningful tag IDs due to certain reasons, therefore you can't even create reliable rules to search the content on the page. I mean, yesterday the necessary tag ID was yhKl7D (whatever that means) but today it's klO98bn. Go figure.

Using an API vs doing it yourself

So, what options do we have at the end of the day? You can craft your very own solution to scrape Google, or you can take advantage of a third-party API to do all the heavy lifting.

The benefits of writing a custom solution are obvious. You are in full control, you 100% understand how this solution works (well, I hope), you can make any enhancements to it as needed, plus the maintenance is like zero bucks.

What are the downsides? For one thing, crafting a proper solution is hard due to the issues listed above. To be honest, I'd rather re-read "War and Peace" (I never liked this novel in school, sorry Mr. Tolstoy) than agree to write such a script. Also, Google might introduce some new obscure stuff that would make your script turn into potato until you understand what's wrong. Also, you'll probably still need to purchase some servers/proxies to overcome rate limitations and other related problems.

So, let's suppose for a second that you are not an adventuring type and don't want to solve all the problems by yourself. In this case I'm happy to present you another way: use our Google Search Results Scraper API that can alleviate 95% of the problems. You don't need to think about how to fetch the data anymore. Rather, you can concentrate on how to use and analyze this data — after all, I'm pretty sure that's your end goal. And if you also need to collect visual assets from SERPs, such as product photos or thumbnails, you can complement it with our Google Image Scraper API to automatically extract images from Google.

Yes, a third-party API comes with a cost but frankly speaking it's worth every penny because it will save you time and hassle. You don't need to think about those annoying captchas, rate limitations, IP bans, cookie consents, and even about HTML parsing. It goes like this: you send a single request and only the relevant data without any extra stuff is delivered straight to your room in a nicely formatted JSON rather than ugly HTML (but you can download the full HTML if you really want). Sounds too good to be true? Isn't it a dream? Welcome to the real world, Mr. Anderson.

How to scrape Google search results with Scrapingbee

Without any further ado, I'm going to explain how to work with the ScrapingBee API to easily scrape Google search results of various types. I'm assuming that you're familiar with Python (no need to be a smooth pro), and you have Python 3 installed on your PC.

If you are not really a developer and have no idea what Python is, don't worry. Please skip to the "Scraping Google search results without coding" section and continue reading.

Quickstart

Okay, so let's get down to business!

To get started, grab your free ScrapingBee trial. No credit card is required, and you'll get 1000 credits.

After logging in, you'll be navigated to the dashboard. Copy your API token as we'll need it to send the requests:

- Next, create your Python project. It can be a regular

.pyscript but I'm going to use a dependency management and packaging tool called uv to create a project skeleton:

uv init google_scraper

cd google_scraper

- We'll need a library to send HTTP requests, so make sure to install Requests. Plus, let's also add a package to read

.envfiles:

uv add requests python-dotenv

- Create a new

.envfile in the project root and store your API key inside. Make sure to add this file to.gitignore.

SCRAPINGBEE_API_KEY=your_api_key_here

- Create a new Python file. For example, I'll create a new

google_scraperdirectory in the project root and add ascraping_bee_classic.pyfile inside. Let's suppose that we would like to scrape results for the "pizza new-york" query. Here's the boilerplate code:

import os

import requests

from dotenv import load_dotenv

from typing import Any

API_URL: str = "https://app.scrapingbee.com/api/v1/google"

def fetch_google_results(api_key: str, query: str) -> requests.Response:

response = requests.get(

url=API_URL,

params={

"api_key": api_key,

"search": query,

},

timeout=10,

)

response.raise_for_status()

return response

def get_api_key() -> str:

load_dotenv()

api_key = os.getenv("SCRAPINGBEE_API_KEY")

if not api_key:

raise ValueError("SCRAPINGBEE_API_KEY not found in .env file")

return api_key

if __name__ == "__main__":

api_key = get_api_key()

query = "pizza new-york"

response = fetch_google_results(api_key, query)

print("Response HTTP Status Code:", response.status_code)

print("Response HTTP Response Body:", response.text)

So, here we're using ScrapingBee Google endpoint to perform scraping. Make sure to provide your api_key from the second step, and adjust the search param as needed to the keyword you want to scrape SERPs for.

You can run the script with:

uv run python google_scraper\scraping_bee_classic.py

By default the API will return results in English but you can adjust the language param to override this behavior.

The response.content will contain all the data in JSON format. However, it's important to understand what exactly this data contains therefore let's briefly talk about that.

Interpreting scraped data

So, the response.content will return JSON data and you can find the sample response in the official docs. Let's cover some important fields:

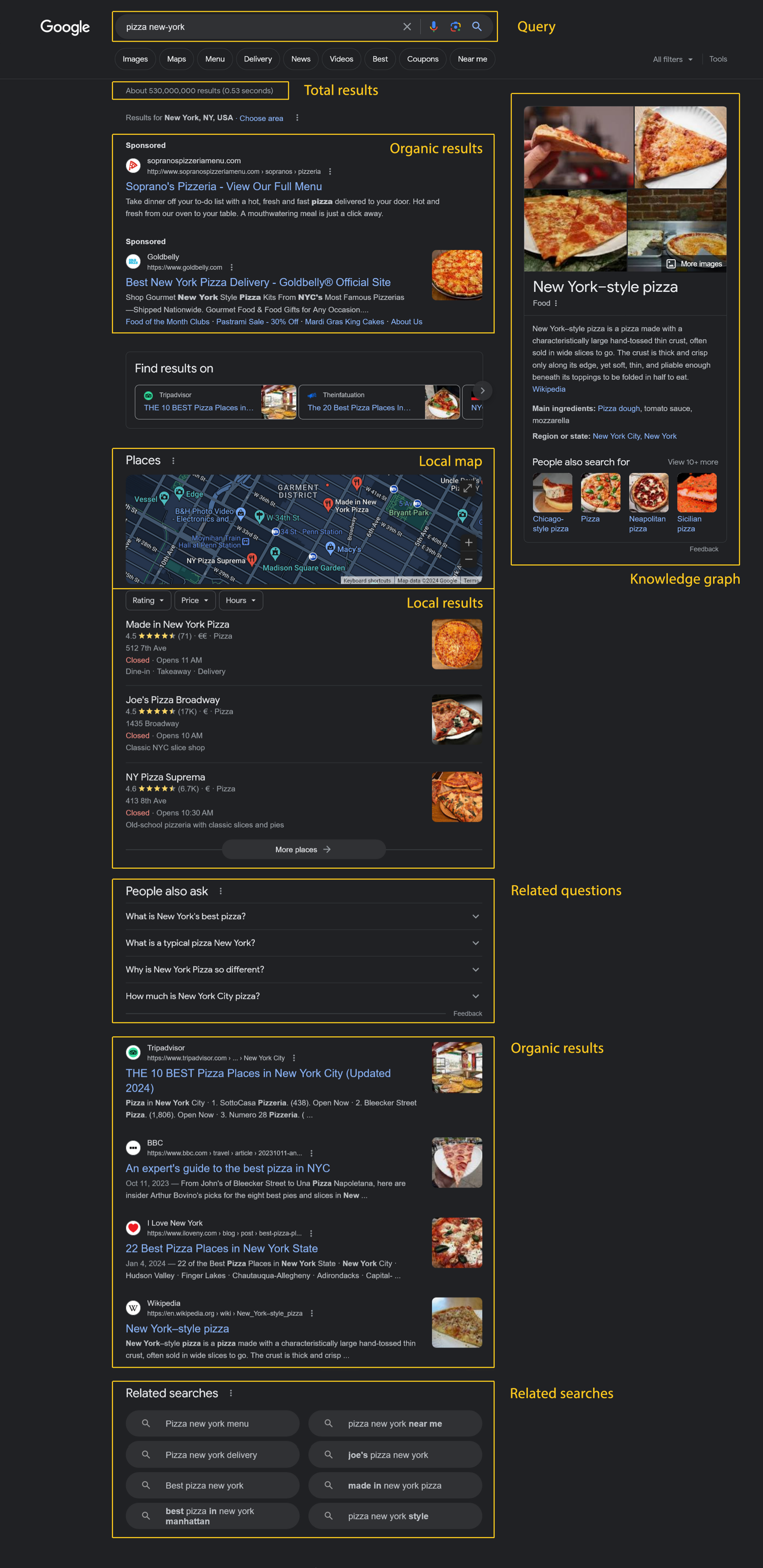

meta_data— your search query in the form ofhttps://www.google.com/search?q=..., number of results, number of organic results.organic_results— these are the actual "search results" as most people see them. For every result there's aurlfield as well asdescription,position,domain.local_results— results based on the chosen location. For example, in our case we'll see some top pizzerias in New York. Every item containstitle,review(rating of the place from 0 to 5, for example,4.5),review_count,position.top_ads— advertisement on the top. Containsdescription,title,url,domain.related_queries— similar queries people search for. Containstitle,position, and sometimesurl.questions— popular questions related to the current query. Each item usually containstextandposition, and may also containanswerwhen Google returns it.knowledge_graph— general information related to the query usually composed from resources like Wikipedia. Containstitle,type,images,website,description.map_results— contains information about locations relevant to the current query. Containsposition,title,address,rating,price(for example$for cheap,$$$for expensive). We will see how to fetch map results later in this article.

Here's an image showing where the mentioned results can be found on the actual Google search page:

Displaying scraped results

Okay, now let's try to display the fetched results in a more user-friendly way. Let's display some organic search results:

# ... other code ...

def print_organic_results(data: dict[str, Any]) -> None:

print("\n=== Organic search results ===")

for result in data.get("organic_results", []):

position = result.get("position", "N/A")

title = result.get("title", "No title")

url = result.get("url", "No URL")

description = result.get("description", "No description")

print(f"\n{position}. {title}")

print(url)

print(description)

You can also provide the page param to work with pagination.

Next, display local search results:

def print_local_results(data: dict[str, Any]) -> None:

print("\n\n=== Local search results ===")

for result in data.get("local_results", []):

position = result.get("position", "N/A")

title = result.get("title", "No title")

review = result.get("review")

review_count = result.get("review_count")

print(f"\n{position}. {title}")

if review is not None and review_count is not None:

print(f"Rating: {review} (based on {review_count} reviews)")

elif review is not None:

print(f"Rating: {review}")

else:

print("Rating: N/A")

Let's now list the related queries:

def print_related_queries(data: dict[str, Any]) -> None:

print("\n\n=== Related queries ===")

queries = data.get("related_queries", [])

if not queries:

print("No related queries found.")

return

for result in queries:

position = result.get("position")

title = result.get("title")

if position is not None:

print(f"\n{position}. {title}")

else:

print(f"\n- {title}")

And finally some relevant questions:

def print_questions(data: dict[str, Any]) -> None:

print("\n\n=== Relevant questions ===")

for result in data.get("questions", []):

position = result.get("position", "N/A")

question = result.get("text", "No question")

answer = result.get("answer")

print(f"\n{position}. {question}")

if answer:

print(f"Answer: {answer}")

else:

print("Answer: Not shown in the response")

Now call everything:

if __name__ == "__main__":

api_key = get_api_key()

query = "pizza new-york"

response = fetch_google_results(api_key, query)

data = response.json()

print("Response HTTP Status Code:", response.status_code)

print_organic_results(data)

print_local_results(data)

print_related_queries(data)

print_questions(data)

Great job!

Monitoring and comparing website positions

Of course that's not all as you can now use the returned data to perform custom analysis. For example, let's suppose we have a list of domains that we would like to monitor search results positions for. Specifically, I'd like my script to say "this domain previously had position X but its current position is Y".

Let's also suppose we have historical data stored in a regular CSV file therefore create a new file google_scraper/data.csv:

the-carboholic.com,reddit.com,yelp.com

2,3,4

Here we are storing previous positions for three domains. Make sure to add a newline at the end of the file because we will append to it later.

Now let's create a new Python script at google_scraper/scraping_bee_comparison.py. First, let's add the imports and a few constants:

import csv

import os

from pathlib import Path

from typing import Any

import requests

from dotenv import load_dotenv

API_URL: str = "https://app.scrapingbee.com/api/v1/google"

SEARCH_QUERY: str = "pizza new-york"

DATA_FILE: Path = Path(__file__).with_name("data.csv")

We'll also read the ScrapingBee API key from the .env file, just like before:

def get_api_key() -> str:

load_dotenv()

api_key = os.getenv("SCRAPINGBEE_API_KEY")

if not api_key:

raise ValueError("SCRAPINGBEE_API_KEY not found in .env file")

return api_key

Your .env file should look like this:

SCRAPINGBEE_API_KEY=your_api_key_here

Next, let's create a function that fetches Google results from ScrapingBee:

def fetch_google_results(api_key: str, query: str) -> dict[str, Any]:

response = requests.get(

url=API_URL,

params={

"api_key": api_key,

"search": query,

},

timeout=10,

)

response.raise_for_status()

return response.json()

Now let's read our CSV file. The domain names are stored in the CSV header, so we don't need to duplicate them in the Python code:

def read_position_history(csv_path: Path) -> tuple[list[str], list[dict[str, str]]]:

with csv_path.open(newline="", encoding="utf-8") as csvfile:

reader = csv.DictReader(csvfile)

if not reader.fieldnames:

raise ValueError("CSV file must contain a header row with domains")

return reader.fieldnames, list(reader)

This function returns two things:

- a list of monitored domains

- a list of historical rows

Next, let's create a tiny helper to normalize domains. Google results can contain www. in the domain name, while our CSV file may not:

def normalize_domain(domain: str) -> str:

return domain.removeprefix("www.")

Now we can find the current positions for our monitored domains:

def find_domain_positions(

data: dict[str, Any],

domains: list[str],

) -> dict[str, int]:

domain_positions = {domain: 0 for domain in domains}

monitored_domains = {

normalize_domain(domain): domain

for domain in domains

}

for result in data.get("organic_results", []):

raw_domain = result.get("domain")

position = result.get("position")

if not raw_domain or not isinstance(position, int):

continue

current_domain = normalize_domain(raw_domain)

original_domain = monitored_domains.get(current_domain)

if original_domain and domain_positions[original_domain] == 0:

domain_positions[original_domain] = position

return domain_positions

The default position is 0. It means that the domain was not found in the current organic results.

Also, the same domain can appear multiple times in Google results. Since organic results are already sorted by position, we only store the first match. In other words, we keep the highest ranking position.

Next, let's print a small report:

def format_position(position: int | str | None) -> str:

if position in (None, "", 0, "0"):

return "not found"

return str(position)

def get_position_change(previous: str | None, current: int) -> str:

if current == 0 and previous in (None, "", "0"):

return "still not found"

if not previous or previous == "0":

return "newly found"

if current == 0:

return "dropped out of current results"

previous_position = int(previous)

if current < previous_position:

return f"up by {previous_position - current} position(s)"

if current > previous_position:

return f"down by {current - previous_position} position(s)"

return "same position"

def print_domain_report(

domains: list[str],

current_positions: dict[str, int],

history: list[dict[str, str]],

) -> None:

print("\n=====")

print("Domain position report")

latest_history_row = history[-1] if history else {}

for domain in domains:

current_position = current_positions[domain]

previous_position = latest_history_row.get(domain)

historical_positions = [

row.get(domain, "N/A")

for row in history

]

print("\n---")

print(f"Domain: {domain}")

print(f"Previous position: {format_position(previous_position)}")

print(f"Current position: {format_position(current_position)}")

print(f"Change: {get_position_change(previous_position, current_position)}")

if historical_positions:

print(f"History: {', '.join(historical_positions)}")

else:

print("History: no historical records yet")

Finally, let's append the new positions to the CSV file:

def ensure_file_ends_with_newline(csv_path: Path) -> None:

if not csv_path.exists() or csv_path.stat().st_size == 0:

return

with csv_path.open("rb+") as file:

file.seek(-1, os.SEEK_END)

last_character = file.read(1)

if last_character != b"\n":

file.write(b"\n")

def append_domain_positions(

csv_path: Path,

domains: list[str],

positions: dict[str, int],

) -> None:

ensure_file_ends_with_newline(csv_path)

with csv_path.open("a", newline="", encoding="utf-8") as csvfile:

writer = csv.DictWriter(

csvfile,

fieldnames=domains,

lineterminator="\n",

)

writer.writerow(positions)

Now let's wire everything together:

def main() -> None:

api_key = get_api_key()

domains, history = read_position_history(DATA_FILE)

data = fetch_google_results(api_key, SEARCH_QUERY)

current_positions = find_domain_positions(data, domains)

print_domain_report(domains, current_positions, history)

append_domain_positions(DATA_FILE, domains, current_positions)

if __name__ == "__main__":

main()

That's it. Now the script fetches fresh Google results, checks positions for domains listed in your CSV file, compares them with the latest historical row, and appends the new data.

Run it:

uv run python google_scraper\scraping_bee_comparison.py

The nice part is that you don't need to worry about how Google results are fetched. You can focus on the actual analysis.

Displaying map results

You might also want to display results from Google Maps. Let's see how to do that.

Create a new Python script at google_scraper/scraping_bee_map.py. First, add the imports and constants:

import os

from typing import Any

import requests

from dotenv import load_dotenv

API_URL: str = "https://app.scrapingbee.com/api/v1/google"

SEARCH_QUERY: str = "pizza new-york"

We'll use the same .env approach to read the ScrapingBee API key:

def get_api_key() -> str:

load_dotenv()

api_key = os.getenv("SCRAPINGBEE_API_KEY")

if not api_key:

raise ValueError("SCRAPINGBEE_API_KEY not found in .env file")

return api_key

Now let's create a function that fetches Google Maps results. The important part here is "search_type": "maps":

def fetch_google_maps_results(api_key: str, query: str) -> dict[str, Any]:

response = requests.get(

url=API_URL,

params={

"api_key": api_key,

"search": query,

"search_type": "maps",

},

timeout=10,

)

response.raise_for_status()

return response.json()

Please note that in this case we set search_type to maps. Another supported type is news.

Now let's add a small helper function to display missing values nicely:

def format_value(value: Any) -> str:

if value in (None, "", []):

return "N/A"

if isinstance(value, list):

return ", ".join(str(item) for item in value)

return str(value)

Finally, let's print the map results:

def print_map_results(data: dict[str, Any]) -> None:

print("\nHere are the map results:")

map_results = data.get("map_results") or data.get("maps_results") or []

if not map_results:

print("No map results found.")

return

for result in map_results:

position = format_value(result.get("position"))

title = format_value(result.get("title"))

address = format_value(result.get("address"))

opening_hours = format_value(result.get("opening_hours"))

link = format_value(result.get("link"))

price = format_value(result.get("price"))

rating = format_value(result.get("rating"))

reviews = format_value(result.get("reviews"))

print(f"\n{position}. {title}")

print(f"Address: {address}")

print(f"Opening hours: {opening_hours}")

print(f"Link: {link}")

print(f"Price: {price}")

print(f"Rating: {rating}, based on {reviews} reviews")

Now let's wire everything together:

def main() -> None:

api_key = get_api_key()

data = fetch_google_maps_results(api_key, SEARCH_QUERY)

print_map_results(data)

if __name__ == "__main__":

main()

This is it. Now the script fetches Google Maps results and displays local businesses with their address, opening hours, price, rating, and link. You can run it with:

uv run python google_scraper\scraping_bee_map.py

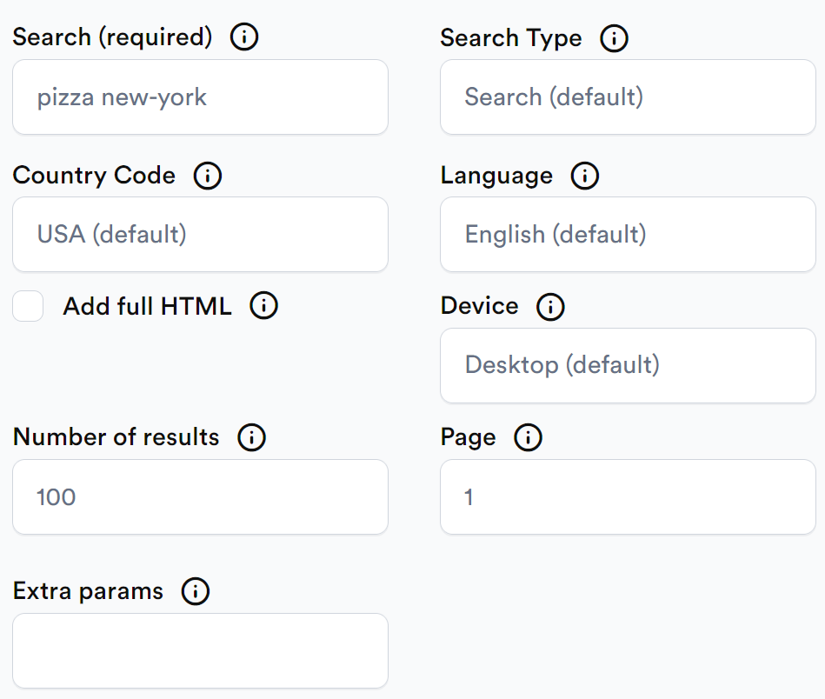

Scraping Google search results with our API builder

If you are not a developer but would like to scrape some data, ScrapingBee is here to help you!

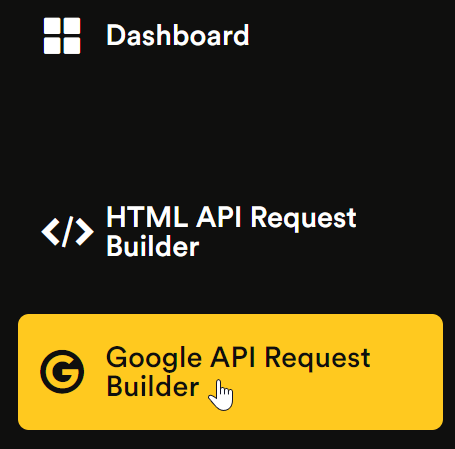

If you haven't already done so, register for free and then proceed to the Google API Request Builder:

Adjust the following options:

- Search — the actual search query.

- Country code — the country code from which you would like the request to come from. Defaults to USA.

- Number of results — the number of search results to return. Defaults to 100.

- Search type — defaults to "classic", which is basically what you are doing when entering a search term on Google without any additional configuration. Other supported values include "maps" and "news". If you specifically need job listings from Google’s job search interface, you can also use our Google Jobs Scraper API.

- Language — the language to display results in. Defaults to English.

- Device — defaults to "desktop" but can also be "mobile".

- Page — defaults to 1. Use 2 for the second page, 3 for the third page, and so on.

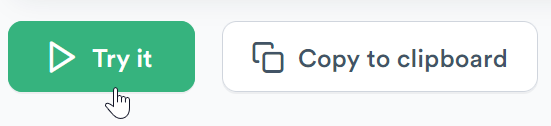

Once you are ready, click Try it:

Wait for a few moments, and the scraping results will be provided for you:

That's it, you can now use these results for your own purposes!

How to scrape Google search results DIY with just Python

Now let's discuss the process of scraping Google search results without any third-party services, only by using our faithful Python.

Preparations

To achieve that, you'll need to install a library called BeautifulSoup. No, I'm not pulling your leg, this is a real library enabling us to easily parse HTML content. Install it:

uv add requests beautifulsoup4

Now let's create a new file google_scraper/scrape_diy.py. Start with the imports:

from dataclasses import dataclass

from urllib.parse import parse_qs, urljoin, urlparse

import requests

from bs4 import BeautifulSoup

from bs4.element import Tag

We'll also define a tiny data class to store each search result:

@dataclass(frozen=True)

class SearchResult:

position: int

title: str

link: str

Sending request

Let's suppose that we would like to scrape information about... well, web scraping, for instance. I mean, why not?

Add a few constants:

GOOGLE_SEARCH_URL: str = "https://www.google.com/search"

SEARCH_QUERY: str = "web scraping"

Now you could try sending a request to Google right away but most likely you won't get the response you're expecting. The lack of outcome is indeed an outcome by itself but still.

The problem is that Google will most likely present a cookie consent screen to you asking to accept or reject cookies. But unfortunately we can't really accept anything in this case because our script literally has no hands to move the mouse pointer and click buttons, duh.

Actually, this is a problem on its own but there's one solution: craft a cookie when making a request so that Google "thinks" you've already seen the consent screen and accepted everything. That's right: send a cookie that says you've accepted cookies.

CONSENT_COOKIE: str = "YES+cb.20220419-08-p0.cs+FX+111"

One important note though: this value is just an example. The CONSENT cookie is not something you should blindly copy and expect to work forever. Google may use different consent values depending on your region, language, account state, browser session, or whatever experiment is currently running.

So realistically, you will probably need to open Google in your own browser, accept or configure the cookie banner manually, then check the actual CONSENT cookie value in Developer Tools and use the value you see there. Even then, this trick is not 100% reliable. Google may still show a consent page, return a fallback page, change the expected cookie format, or handle your request differently depending on where it comes from.

Also let's prepare a fake user agent (feel free to modify it):

USER_AGENT: str = (

"Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:109.0) "

"Gecko/20100101 Firefox/118.0"

)

Add the following function:

def fetch_google_html(query: str) -> str:

response = requests.get(

GOOGLE_SEARCH_URL,

params={

"q": query,

"hl": "en",

"gl": "us",

},

headers={

"User-Agent": USER_AGENT,

},

cookies={

"CONSENT": CONSENT_COOKIE,

},

timeout=10,

)

response.raise_for_status()

return response.text

The hl and gl parameters are not required, but they make the response a bit more predictable:

hl=enasks Google to return results in Englishgl=usasks Google to use the US as the target country

Parsing HTML

Now we can parse the returned HTML with BeautifulSoup:

def create_soup(html: str) -> BeautifulSoup:

return BeautifulSoup(html, "html.parser")

Google usually places organic search result titles inside h3 elements. So we can select all h3 tags inside the #search block:

def find_result_headings(soup: BeautifulSoup) -> list[Tag]:

headings = soup.select("#search h3")

return [

heading

for heading in headings

if isinstance(heading, Tag)

]

This gives us a list of possible result titles.

Nice! Now you can simply iterate over the found headers.

Extracting links

Unfortunately, in some cases the header does not really belong to the search result thus let's check if the header's parent contains an href (in other words, its parent is a link).

Google can return links in different formats. Sometimes the link is direct:

https://example.com/page

Sometimes it's a Google redirect link:

/url?q=https://example.com/page&sa=...

Let's handle both cases:

def clean_google_link(raw_link: str) -> str:

if raw_link.startswith("/url?"):

parsed_url = urlparse(raw_link)

query_params = parse_qs(parsed_url.query)

if "q" in query_params:

return query_params["q"][0]

return urljoin("https://www.google.com", raw_link)

Now let's turn the headings into structured search results:

def parse_search_results(soup: BeautifulSoup) -> list[SearchResult]:

results: list[SearchResult] = []

seen_links: set[str] = set()

for heading in find_result_headings(soup):

link_tag = heading.find_parent("a", href=True)

if not isinstance(link_tag, Tag):

continue

raw_link = link_tag.get("href")

if not isinstance(raw_link, str):

continue

title = heading.get_text(strip=True)

link = clean_google_link(raw_link)

if not title or not link.startswith("http"):

continue

if link in seen_links:

continue

seen_links.add(link)

results.append(

SearchResult(

position=len(results) + 1,

title=title,

link=link,

)

)

return results

Here we do a few things:

- find the nearest parent link for each

h3 - extract the title

- clean Google redirect URLs

- skip duplicate links

- assign positions based on the order of results

Displaying the results

Finally, let's print the results:

def print_search_results(results: list[SearchResult]) -> None:

if not results:

print("No search results found.")

return

print("\nSearch results:")

for result in results:

print(f"\n{result.position}. {result.title}")

print(result.link)

Now let's wire everything together:

def main() -> None:

html = fetch_google_html(SEARCH_QUERY)

soup = create_soup(html)

results = parse_search_results(soup)

print_search_results(results)

if __name__ == "__main__":

main()

And that's it — well, at least in theory.

This tiny script sends a request to Google, parses the returned HTML, finds result titles, extracts links, and prints them in the terminal. For a small demo, that's pretty neat. You get to see how search result scraping works under the hood: request the page, parse the HTML, grab the useful bits.

But here comes the less fun part: DIY Google scraping gets super problematic really fast. In many cases, Google will not return the regular search results page you were expecting. Instead, it might respond with a tiny fallback page saying something like:

If you're having trouble accessing Google Search, please click here

That is not really a BeautifulSoup bug, and the parser did not suddenly forget how HTML works. Google simply didn't give you the SERP HTML you wanted. And there are plenty of reasons why that can happen:

- You may get a cookie consent page instead of search results.

- The response may be a bot-detection page.

- The HTML can vary by region, language, device, or session.

- The page markup can change without warning.

- A selector that works today may return nothing tomorrow.

- A request that works once on your laptop may fail after too many repeats.

You can try changing headers, cookies, user agents, language parameters, or region parameters. Sometimes that helps. Sometimes it does absolutely nothing.

The annoying part is that none of this is a stable contract. You are parsing a consumer-facing web page, not using an API that promises to keep its response format predictable.

Can a headless browser help?

Yeah, it can help a bit. Tools like Playwright, Puppeteer, or Selenium can execute JavaScript, store cookies, follow redirects, and behave more like a real browser than a plain requests.get() call. That can make a difference when Google expects browser-like behavior.

So if the problem is a cookie banner, JavaScript, or some browser-dependent page behavior, a headless browser may get you further than plain requests. But it is still not magic.

A headless browser is heavier, slower, and more expensive to run. Spinning up Chromium for every search request is a very different game from sending a simple HTTP request.

And more importantly, Google can still detect suspicious automation patterns. It can look at request frequency, sessions, IP reputation, repeated queries, datacenter traffic, unusual browsing behavior, and other signals that have nothing to do with whether the script can run JavaScript.

So, headless browsing can improve your chances for small experiments, but for production scraping, it is still fragile.

Quick note: Google treats automated queries to Search, including scraping results for rank checking, as machine-generated traffic. So before building anything serious around Google SERP data, make sure your use case, tooling, and data handling are compliant with the relevant terms and laws.

Why not just use Google's official API?

In a perfect world, this would be the obvious answer: use the official Google Search API and avoid the whole scraping headache. But the official route is not always that simple.

Google's Custom Search JSON API is closed to new customers, and existing customers have to move to another solution by January 1, 2027. Google currently points new users toward Vertex AI Search for site-restricted search across up to 50 domains, or asks full-web-search use cases to contact them directly.

So if you require broad Google Search result data, the official option may not be available, flexible, or practical enough for your use case.

That leaves us in a weird spot. The full DIY method is great for learning. It shows the basic mechanics: send a request, parse the HTML, extract titles and links. But as a production setup, it is not very reliable.

If you need stable Google Search data, it is usually better to use a scraping API that can handle the painful parts for you: browser rendering, JavaScript, retries, localization, blocks, and all the tiny failures that make DIY Google scraping annoying.

Using ScrapingBee to send requests

Actually, that's where ScrapingBee can also assist because we provide a dedicated Python client to send HTTP requests. This client enables you to use proxies, make screenshots of the HTML pages, adjust cookies, headers, and more.

To get started, install the client by running uv add scrapingbee.

Now create a new file at google_scraper/scrape_diy_with_scrapingbee.py. First, add the imports:

import os

from dataclasses import dataclass

from pathlib import Path

from urllib.parse import parse_qs, urljoin, urlparse

from requests import Response

from bs4 import BeautifulSoup

from bs4.element import Tag

from dotenv import load_dotenv

from scrapingbee import ScrapingBeeClient

Next, provide a few constants:

TARGET_URL: str = "https://www.google.com/search?q=web+scraping&hl=en&gl=us"

SCREENSHOT_FILE: Path = Path("google_search_screenshot.png")

HTML_FILE: Path = Path("google_search_rendered.html")

ERROR_FILE: Path = Path("scrapingbee_error.html")

We'll read the API key from the .env file, same as before:

def get_api_key() -> str:

load_dotenv()

api_key = os.getenv("SCRAPINGBEE_API_KEY")

if not api_key:

raise ValueError("SCRAPINGBEE_API_KEY not found in .env file")

return api_key

Your .env file should look like this:

SCRAPINGBEE_API_KEY=your_api_key_here

Now let's create the ScrapingBee client:

def create_client(api_key: str) -> ScrapingBeeClient:

return ScrapingBeeClient(api_key=api_key)

Now we can fetch the rendered Google page:

def fetch_rendered_google_html(

client: ScrapingBeeClient,

url: str,

) -> str:

response = client.get(

url,

params={

"custom_google": "true",

"render_js": "true",

"wait_browser": "load",

"block_resources": "false",

"premium_proxy": "true",

},

retries=3,

)

if not response.ok:

save_response_debug(response, ERROR_FILE)

response.raise_for_status()

return response.text

A couple of params are important here:

"custom_google": "true"tells ScrapingBee that we're working with Google and need special handling. For structured SERP data, the dedicated Google API is still the easiest option."render_js": "true"renders the page in a browser instead of returning raw, unfinished HTML."wait_browser": "load"waits until the browser fires theloadevent. This is more reliable than waiting for a fixed number of milliseconds."block_resources": "false"keeps images, CSS, and other resources enabled. For Google, blocking them may break the rendered page."premium_proxy": "true"is often needed because Google tends to return CAPTCHAs or fallback pages when using standard proxies. If premium proxies still don't work, you may need to usestealth_proxy=true. Yes it's more expensive but sometimes that's the only mode that gets things done.

Let's also add a function that saves the rendered HTML to a local file. This is handy for debugging:

def save_html(html: str, output_path: Path) -> None:

output_path.write_text(html, encoding="utf-8")

print(f"Saved rendered HTML to {output_path}")

Add a debug function in case something goes wrong (unfortunately, something will probably go wrong initially):

def save_response_debug(response: Response, output_path: Path) -> None:

output_path.write_bytes(response.content)

print("\nScrapingBee request failed.")

print(f"Status code: {response.status_code}")

print(f"Saved response body to {output_path}")

print("Open that file to see what ScrapingBee/Google returned.")

Now let's ask ScrapingBee to take a full-page screenshot using the screenshot param. Please note that screenshot=True does not magically create a file in your current directory. The screenshot is returned as bytes in response.content, so we need to save it ourselves:

def save_full_page_screenshot(

client: ScrapingBeeClient,

url: str,

output_path: Path,

) -> None:

response = client.get(

url,

params={

"custom_google": "true",

"render_js": "true",

"wait_browser": "networkidle2",

"screenshot": "true",

"screenshot_full_page": "true",

"block_resources": "false",

"premium_proxy": "true",

},

retries=3,

)

if not response.ok:

save_response_debug(response, ERROR_FILE)

response.raise_for_status()

output_path.write_bytes(response.content)

print(f"Saved screenshot to {output_path}")

Of course, we can still parse the rendered HTML with BeautifulSoup.

Let's reuse the same small data class from the DIY example:

@dataclass(frozen=True)

class SearchResult:

position: int

title: str

link: str

Now add a helper to clean Google redirect links:

def clean_google_link(raw_link: str) -> str:

if raw_link.startswith("/url?"):

parsed_url = urlparse(raw_link)

query_params = parse_qs(parsed_url.query)

if "q" in query_params:

return query_params["q"][0]

return urljoin("https://www.google.com", raw_link)

We should also skip internal Google links:

def is_google_internal_link(link: str) -> bool:

parsed_url = urlparse(link)

if not parsed_url.netloc:

return True

return "google." in parsed_url.netloc

Now let's parse the search results from the rendered HTML:

def parse_search_results(html: str) -> list[SearchResult]:

soup = BeautifulSoup(html, "html.parser")

results: list[SearchResult] = []

seen_links: set[str] = set()

for link_tag in soup.find_all("a", href=True):

if not isinstance(link_tag, Tag):

continue

heading = link_tag.find("h3")

if not isinstance(heading, Tag):

continue

raw_link = link_tag.get("href")

if not isinstance(raw_link, str):

continue

title = heading.get_text(strip=True)

link = clean_google_link(raw_link)

if not title or not link.startswith("http"):

continue

if is_google_internal_link(link):

continue

if link in seen_links:

continue

seen_links.add(link)

results.append(

SearchResult(

position=len(results) + 1,

title=title,

link=link,

)

)

return results

Finally, let's display the parsed results:

def print_search_results(results: list[SearchResult]) -> None:

if not results:

print("No search results found in the rendered HTML.")

return

print("\nSearch results:")

for result in results:

print(f"\n{result.position}. {result.title}")

print(result.link)

Now wire everything together:

def main() -> None:

api_key = get_api_key()

client = create_client(api_key)

html = fetch_rendered_google_html(client, TARGET_URL)

save_html(html, HTML_FILE)

results = parse_search_results(html)

print_search_results(results)

save_full_page_screenshot(client, TARGET_URL, SCREENSHOT_FILE)

if __name__ == "__main__":

main()

Run it:

uv run python google_scraper/scrape_diy_with_scrapingbee.py

After the script runs, you should get:

- parsed search results printed in the terminal

google_search_rendered.htmlwith the rendered HTMLgoogle_search_screenshot.pngwith a full-page screenshot

Pretty neat. The parser can still break if Google changes its markup, but at least now we're not stuck at the "Google returned a weird fallback page with zero results" stage.

💡Interested in analysing PPC competitors to see what they're ranking for and how their ad copy changes? Check out our guide on how to build a Google Ads Competitor Monitoring System

Conclusion

In this article we have discussed how to scrape Google search results with Python. We have seen how to use ScrapingBee for the task and how to write a custom solution. Please find the source code for this article on GitHub.

Thank you for your attention, and happy scraping!

Before you go, check out these related reads:

Ilya is an IT tutor and author, web developer, and ex-Microsoft/Cisco specialist. His primary programming languages are Ruby, JavaScript, Python, and Elixir. He enjoys coding, teaching people and learning new things. In his free time he writes educational posts, participates in OpenSource projects, tweets, goes in for sports and plays music.