There are many ways that businesses and individuals can gather information about their customers, and web crawling and web scraping are two of the most common approaches. You'll hear these terms used interchangeably, but they are not the same thing.

In this article, we'll go over the differences between web scraping and web crawling and how they relate to each other. We will also cover some use cases for both approaches and tools you can use.

What is web scraping

At its most basic, web scraping means extracting data from a website. The relevant data is then collected and exported to a different format. Some users will put the scraped information into a spreadsheet or a database, or send it to an API for further processing.

Web scraping isn't always a simple task. The ability to scrape a website for useful data is highly dependent on the shape of the content on that website. If the site has JavaScript-rendered pages, images, or other formats, it will be more complex to get the data from them. The other challenge is that websites are often updated, and a change to a page's structure can break your scraper.

Approaches to web scraping

There are different methods you can use to approach web scraping. You can start web scraping manually if you are looking for a small amount of information from a few URLs. This means you'll go through each page and get the data you're looking for. This could be price information from a particular website or finding addresses from an online directory.

You also have the option of using automated web scrapers, and there are a number of web scraping tools available.

You can also create your own custom automated web scrapers if you have some programming knowledge. This will give you more control over what data you extract from websites, but it can take a considerable amount of time.

Web scrapers give you the ability to automate data extraction from multiple websites simultaneously. As long as you have a list of websites that you want to scrape for data and you know the data you are looking for, this is an invaluable data collection tool. You'll be able to gather information from multiple sources accurately and quickly.

How web scrapers work

Web scrapers work by taking a list of URLs and loading all of the HTML code for those web pages. If you're using a more advanced scraper, it will render the entire page, including the CSS and JavaScript. Then the scraper will gather either all of the data on the page or a specific type of data you've defined.

If you want your scraper to work quickly and efficiently, defining the data you're looking for before starting the web scraping process will be the best approach. For example, if you know you want to get pricing data for a specific product on Amazon and you don't want the reviews, defining that beforehand will save a lot of time and resources.
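As a rough illustration of defining the target data up front, here is a minimal sketch that pulls only the price out of a page and ignores the reviews. The HTML, the `price` class name, and the `PriceParser` and `extract_prices` names are all assumptions made for this example; a real page will have its own markup.

```python
from html.parser import HTMLParser

# Hypothetical product page markup; real sites use different tags and classes.
SAMPLE_HTML = """
<div class="product">
  <h1 class="title">Example Widget</h1>
  <span class="price">$19.99</span>
  <div class="review">Great product!</div>
  <div class="review">Would buy again.</div>
</div>
"""


class PriceParser(HTMLParser):
    """Collects only the text inside elements whose class is 'price'."""

    def __init__(self):
        super().__init__()
        self._in_price = False
        self.prices = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs
        if dict(attrs).get("class") == "price":
            self._in_price = True

    def handle_endtag(self, tag):
        # Any closing tag ends the capture; enough for a flat element
        # like the <span> in the sample above.
        self._in_price = False

    def handle_data(self, data):
        if self._in_price and data.strip():
            self.prices.append(data.strip())


def extract_prices(html):
    parser = PriceParser()
    parser.feed(html)
    return parser.prices


print(extract_prices(SAMPLE_HTML))
```

Because the parser only looks for one kind of element, the review text is never stored or processed.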

Once the web scraper has all of the data that you want to collect, it will put that data into a format that you choose. Most web scrapers output data into a CSV file or an Excel spreadsheet. Others give you more advanced options, like returning a JSON object which can be used in API calls for further processing.
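The export step could look something like the sketch below, which serializes the same records to CSV and to JSON with Python's standard library. The `records` list and its field names are made up for the example.

```python
import csv
import io
import json

# Hypothetical scraped records; the field names are illustrative only.
records = [
    {"product": "Example Widget", "price": "19.99"},
    {"product": "Another Widget", "price": "4.50"},
]


def to_csv(rows):
    """Serialize a list of dicts to CSV text, using the first row's keys as the header."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def to_json(rows):
    """Serialize the same rows as a JSON array, ready to send to an API."""
    return json.dumps(rows, indent=2)


print(to_csv(records))
print(to_json(records))
```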

Use cases for web scraping

Most of the use cases for web scraping are in a business context. A company might want to check what products its competitors are selling and the prices they are selling them at. They might also want to check websites for any mentions of them or to find data that will help with their SEO strategy.

Here are a few examples of how businesses use web scraping:

- News aggregation to check for company mentions across multiple platforms

- E-commerce monitoring to see how competitors are doing

- Hotel and flight comparators to see how market prices are fluctuating

- Market research for new products

- Lead generation by gathering user information

- Bank account aggregation, as done by services like plaid.com or Mint.com

- Data journalism to tell stories through infographics

More on data scraping

Another thing to keep in mind is that scraping for data doesn't have to be entirely online. It's possible to scrape PDFs, images, and other offline documents as well. This usually falls under the category of data scraping. The key difference between web scraping and data scraping is that web scraping happens exclusively online. It's like a subset of data scraping, which can happen online or offline.

There are a lot of OCR (optical character recognition) tools that will help you extract data from these offline documents.

What is web crawling

Web crawling is the process of indexing content from all over the internet. It's like if someone went through a large music collection and organized it alphabetically so that people can find the songs they want. That way they can find the exact song they are looking for at any time. Web crawlers take a jumbled mix of information and organize it.

You'll also hear web crawlers referred to as web spiders or spider bots. You might not know all of the pages that a website has available until you use a bot, so crawling is how you discover that new information exists on a site. Crawlers let you know what content is available and where it is located, but they don't actually gather the information for you.

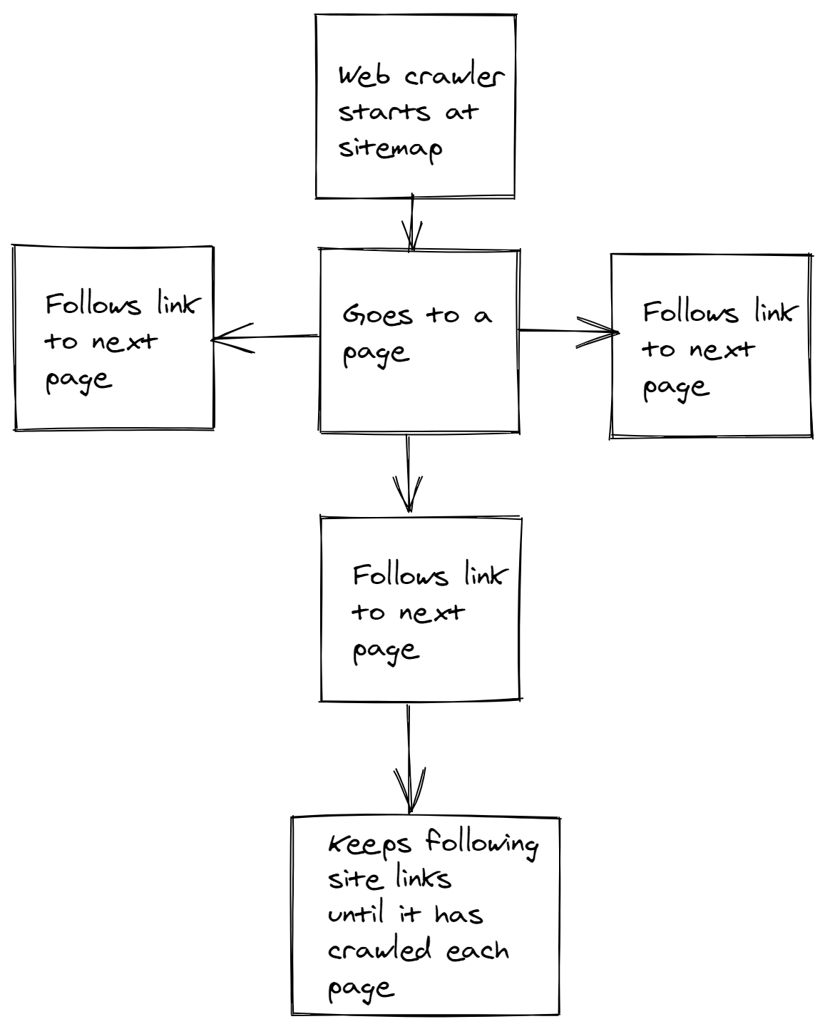

This is the way search engines like Google work. They use a web crawling bot to follow links and sort through information. Web crawlers work by going through a website's sitemap to discover what information a website contains or starting at an initial page and finding other pages linked to it.

How web crawlers work

To start, a web crawler needs an initial starting point, which is typically a link to a page on a specific website. Once it has that initial link, it will start going through any other links on that page. As it goes through different links, it will build its own map of the site as it learns the type of content on each page.
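The link-following process described above can be sketched as a breadth-first traversal. To keep the example self-contained, the "website" here is a hypothetical in-memory dictionary (`PAGES`) and `fetch` is any function that returns HTML for a URL; a real crawler would fetch pages over HTTP and add politeness delays, error handling, and domain limits.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical in-memory "website": URL -> HTML.
PAGES = {
    "https://example.com/": '<a href="/about">About</a> <a href="/products">Products</a>',
    "https://example.com/about": '<a href="/">Home</a>',
    "https://example.com/products": '<a href="/products/widget">Widget</a>',
    "https://example.com/products/widget": "<p>No links here</p>",
}


class LinkParser(HTMLParser):
    """Collects the href of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def crawl(start_url, fetch):
    """Breadth-first crawl; returns {url: [absolute links found on that page]}."""
    site_map = {}
    queue = deque([start_url])
    seen = {start_url}
    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None:  # page could not be loaded
            continue
        parser = LinkParser()
        parser.feed(html)
        links = [urljoin(url, href) for href in parser.links]
        site_map[url] = links
        for link in links:
            if link not in seen:  # never queue the same page twice
                seen.add(link)
                queue.append(link)
    return site_map


site_map = crawl("https://example.com/", PAGES.get)
for page, links in site_map.items():
    print(page, "->", links)
```

The `seen` set is what keeps the crawler from looping forever when pages link back to each other, as the About page does here.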

Sitemaps are also a great starting point for web crawlers. They give crawlers a way to see exactly how a website's content is organized and what its internal linking strategy is. This is an especially powerful starting point for large websites, sites with pages that aren't well linked to each other, new sites that have few external links, or sites that have a lot of rich media like images or videos.
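For instance, a crawler might read the URLs out of an XML sitemap before following any links. The sketch below assumes a sitemap in the sitemaps.org XML format; the sample contents and the `sitemap_urls` helper are invented for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical sitemap contents, using the sitemaps.org XML namespace.
SITEMAP_XML = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/products</loc><lastmod>2024-01-15</lastmod></url>
</urlset>
"""

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return every <loc> URL listed in a sitemap document."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS)]


print(sitemap_urls(SITEMAP_XML))
```

The resulting list can be used as the crawler's starting queue, which helps with pages that no other page links to.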

Most sites try to optimize their crawlability for SEO purposes. If the content of a website is easily discoverable by web crawlers, the site is likely to rank higher in search engine results because its content is easier to find. There are a few ways that web crawling can be performed.

Web crawling can be done manually by going through all of the links on multiple websites and taking notes about which pages contain information relevant to your search. It's more common to use an automated tool to do this though.

Web crawling tools

You can find both free and paid web crawling tools, and if you have some programming skills, you could even make your own web crawler. Here are a few commonly used automated web crawling tools:

- Scrapy (also a web scraping tool)

- Apache Nutch

- Storm Crawler

- Screaming Frog

These tools let you automate your web crawling activities, allowing you to scan thousands of websites for content that may be useful to you. They go deeper into a website than a manual scan would allow because they find links and pages that might not be listed in easily accessible areas of a site.

While Python is a popular language for building web crawlers, you can also use other languages like JavaScript or Java to write your own custom web crawler. Now that you are familiar with some of the tools you can use to crawl websites, let's go over a few use cases.

Use cases for web crawling

The most common use of web crawlers is for search engines, like Google, Bing, or DuckDuckGo, to find and index information for users to search through. A search engine like Google will use web crawlers to index sites based on the content they have available for bots to look through. When a bot finds a website that contains information relevant to a particular subject, it will make a note of that site, and the search engine will rank it in a user's search results accordingly.

There are plenty of other reasons you would want to use a web crawler. Here are a few examples.

- SEO analytics tools that marketers use for researching keywords and finding competitors, like Ahrefs or Moz

- On-page SEO analysis to find common errors on websites, like pages that return 404 or 500 errors

- Price monitoring tools to find product pages

- Collaborative research in academia with a resource like Common Crawl

More on web crawlers

Unlike web scraping, offline data crawling isn't as popular. You can search through documents and images available to you, but that data is usually already labeled as relevant or irrelevant to your research because you have local access to it. You aren't necessarily finding new content by doing a crawl on your own computer.

One thing you should be aware of with web crawlers is that some websites may not want bots searching through their pages. Some sites will block certain web crawlers using a robots.txt file. This file tells specific crawling agents which pages they should not crawl, but it doesn't guarantee that the content stays out of search engine results, because a page can still be indexed if other sites link to it.
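If you write your own crawler, you can check these rules before requesting a page. Here is a minimal sketch using `urllib.robotparser` from Python's standard library; the robots.txt contents and the bot names (`BadBot`, `GoodBot`) are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: one bot is blocked entirely,
# everyone else is only kept out of /private/.
ROBOTS_TXT = """\
User-agent: BadBot
Disallow: /

User-agent: *
Disallow: /private/
"""

rules = RobotFileParser()
rules.parse(ROBOTS_TXT.splitlines())


def allowed(agent, url):
    """True if the given user agent may crawl the URL under these rules."""
    return rules.can_fetch(agent, url)


print(allowed("BadBot", "https://example.com/page"))
print(allowed("GoodBot", "https://example.com/page"))
print(allowed("GoodBot", "https://example.com/private/data"))
```

In a real crawler you would typically load the file from the site with `set_url()` and `read()` instead of parsing an inline string.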

Final thoughts

A big reason for the confusion between web scraping and web crawling is that they are commonly done together. Typically when a business is trying to gather information from other websites, they'll want to crawl the pages and extract information from the pages' content as they go.

Web crawlers are also useful for de-duplicating data. For example, many people post articles and products across different sites. A web crawler will be able to identify the duplicate data and not index it again. This will save you time and resources when you're ready to perform web scraping. You'll only have one copy of all the useful data you find.
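One simple way to approximate this is to fingerprint each page's text and keep only the first URL seen for each fingerprint. The sketch below is an assumption about how such a step might look, not a description of how any particular crawler does it; it only catches content that is identical after normalizing case and whitespace, not near-duplicates.

```python
import hashlib


def content_fingerprint(text):
    """Hash the text after lowercasing and collapsing whitespace."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def deduplicate(pages):
    """pages: iterable of (url, text). Keeps the first URL seen for each distinct content."""
    first_seen = {}
    for url, text in pages:
        first_seen.setdefault(content_fingerprint(text), url)
    return list(first_seen.values())


# Hypothetical crawl results: the same article posted on two sites.
crawled = [
    ("https://site-a.example/post", "Ten tips for   better scraping"),
    ("https://site-b.example/repost", "ten tips for better scraping"),
    ("https://site-a.example/other", "A different article"),
]
print(deduplicate(crawled))
```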

Web scraping is for more targeted research when you have already performed web crawling to identify the websites that have the information you need. Creating a list of relevant websites with your web crawling will save you time and money because you won't have to scrape information from sites that don't have the data you're interested in.

When thinking about using web crawling and web scraping together, you can create a completely automated process. You can generate a list of links through API calls and store them in a format that your web scraper can use to extract data from those particular pages. Once you have a system like this in place, you can get data from all over the internet without having to do much manual work.

An example of this would be an automated crawler that scans new products added to an e-commerce site. Then for each new product, a scraper is used to extract the new product’s data, like the price, images, product code, or description.
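A toy version of that combined process might look like the following. The shop pages, the `price` class, and the `crawl_and_scrape` function are all hypothetical, and the pages live in an in-memory dictionary so the sketch runs without network access.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical shop: URL -> HTML.
SHOP = {
    "https://shop.example/": '<a href="/p/1">Widget</a><a href="/p/2">Gadget</a>',
    "https://shop.example/p/1": '<h1>Widget</h1><span class="price">9.99</span><a href="/">Home</a>',
    "https://shop.example/p/2": '<h1>Gadget</h1><span class="price">24.00</span>',
}


class PageParser(HTMLParser):
    """Collects outgoing links (for crawling) and the price text (for scraping)."""

    def __init__(self):
        super().__init__()
        self.links = []
        self.price = None
        self._in_price = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "a" and attrs.get("href"):
            self.links.append(attrs["href"])
        self._in_price = attrs.get("class") == "price"

    def handle_endtag(self, tag):
        self._in_price = False

    def handle_data(self, data):
        if self._in_price and data.strip():
            self.price = data.strip()


def crawl_and_scrape(start_url, fetch):
    """Follow links from start_url and return {product_url: price} for pages with a price."""
    results = {}
    queue, seen = deque([start_url]), {start_url}
    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        page = PageParser()
        page.feed(html)
        if page.price is not None:  # scraping step: only product pages have a price
            results[url] = page.price
        for href in page.links:  # crawling step: queue newly discovered pages
            link = urljoin(url, href)
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return results


print(crawl_and_scrape("https://shop.example/", SHOP.get))
```

The crawling and scraping steps stay separate inside the loop, which mirrors the distinction this article draws: one discovers pages, the other extracts data from them.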

Here's a table highlighting the main differences between web scraping and web crawling.

| Web Scraping | Web Crawling |
|---|---|
| Extracts information from the contents of a page | Indexes pages based on their content |
| Does not index the content across multiple pages | Does not extract content from any page it indexes |

Sometimes questions about the legality of web crawling and web scraping come up. In general, both of them are completely legal. You can crawl and scrape your own websites with no problems. The grey area comes in with how you are using the data and whether or not you have permission to access the data on certain websites.


Kevin worked in the web scraping industry for 10 years before co-founding ScrapingBee. He is also the author of the Java Web Scraping Handbook.