JavaScript is everywhere these days, and web scraping in Node.js and JavaScript has become way easier thanks to how far the whole ecosystem has come. With Node giving JS a fast, server-side runtime, you can pull data from websites just as easily as you build web or mobile apps.

In this article, we'll walk through how the Node.js toolbox lets you scrape the web efficiently and handle most real-world scraping needs without breaking a sweat.

Quick answer (TL;DR)

The fastest way to get started with web scraping in Node.js and JavaScript is: fetch the page HTML, parse it with Cheerio, grab the elements you care about using CSS selectors, and output them as JSON. You only need Node.js 20+ (for fetch) and one dependency.

- Install Cheerio: npm install cheerio.

- Make sure to use "type": "module" in your package.json.

- Create scrape.js with the code below.

- Run node scrape.js and read the JSON output.

import * as cheerio from 'cheerio';

const URL = 'https://quotes.toscrape.com/';

async function scrape() {

// Fetch the page to scrape

const res = await fetch(URL);

// Grab raw HTML

const html = await res.text();

// Parse with Cheerio

const $ = cheerio.load(html);

const quotes = [];

// Get each quote

$('.quote').each((_i, el) => {

// Get quote text and author

const text = $(el).find('.text').text().trim();

const author = $(el).find('.author').text().trim();

const tags = [];

// Get tags

$(el)

.find('.tags .tag')

.each((_j, tagEl) => tags.push($(tagEl).text().trim()));

// Add to the array

quotes.push({ text, author, tags });

});

console.log(JSON.stringify(quotes, null, 2));

}

await scrape();

This script fetches the HTML from quotes.toscrape.com, loads it into Cheerio, selects each quote block, and extracts the text, author, and tags into a clean JSON array.

It's the simplest form of web scraping in Node.js and JavaScript. Below, we'll dive into every tool and complexity behind it.

Introduction to web scraping with Node.js and JavaScript

Prerequisites

This post is mainly for developers who already have some JavaScript experience, but if you understand how web scraping works in general, you can still use this as a light intro to the JavaScript side of things. We'll be using Node.js 20+ (Node.js 24 is the current LTS as of November 2025), so being comfortable with modern JS helps.

- ✅ Some experience with JavaScript

- ✅ Knowing how to use browser DevTools to grab selectors

- ✅ A bit of ES6 knowledge (optional)

⭐ Check out the resources at the end of the article if you want to go deeper!

Outcomes

After reading this post you will be able to:

- Understand how Node.js fits into the scraping workflow

- Use different HTTP clients to make the scraping process easier

- Work with modern, battle-tested libraries to scrape the web

Why not scrape with frontend JavaScript?

A lot of folks try web scraping with JavaScript by popping open the browser console and running a few fetch calls. It works for tiny tests, but it falls apart fast. The browser is locked behind CORS and the same-origin policy, so most sites will just block your requests outright. You also can't automate anything properly: no loops over hundreds of pages, no scheduling, no clean way to rotate headers or proxies. And scaling? Forget it. Refreshing your browser 500 times isn't a strategy (probably).

That's why web scraping in Node.js and JavaScript is the real move. Node gives you a proper runtime with no CORS walls, real automation, proper libraries, and the ability to scale up without losing your mind.

If you want to see a more powerful approach, check out the JavaScript web scraping API brought to you by ScrapingBee.

Understanding Node.js: A brief introduction

Before we dive into web scraping in Node.js and JavaScript, it helps to remember where all this madness actually started.

Back in the day, JavaScript lived only in the browser, doing tiny interactive party tricks like popping alerts, poking the DOM, adding a bit of HTML magic here and there. Browsers handed it a small playground with globals like document and window, and for a long time that was its entire kingdom. And yeah, I still remember it vividly... I was there, Gandalf. Three thousand years ago, when "advanced scripting" meant making a button wiggle or adding falling snow that murdered your CPU on sight.

Things changed in 2009 when Ryan Dahl introduced Node.js. He basically grabbed Chrome's JS engine and dropped it onto the server side. Outside the browser, JavaScript no longer had access to things like a page's DOM or cookie storage, but it gained something much more powerful: direct access to system resources. Suddenly it could read and write files, talk to databases, open raw network connections: all the stuff real backend tools need to do.

In short, Node.js turned JavaScript into a legit server-side language. Same syntax you already know, but without the sandbox and with a ton of new capabilities. And that's exactly why it works so well for web scraping with JavaScript — you finally get the freedom the browser wouldn't give you.

The JavaScript event loop

It's also worth understanding the little engine that makes async magic possible: the event loop. JavaScript has always been single-threaded, and instead of juggling a bunch of threads like other languages, it leans heavily on asynchronous operations and callbacks (or Promises nowadays). That's why so much of JavaScript web scraping feels natural: async is baked into the language itself.
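To make the scheduling a bit more concrete, here's a minimal sketch of how synchronous code, Promise callbacks, and timers take turns on that single thread. The names order, record, and demo are made up for this illustration and don't come from any library.

```javascript
// Illustrative only: `order`, `record`, and `demo` are made-up names.
const order = [];
const record = (label) => order.push(label);

function demo() {
  return new Promise((resolve) => {
    // Timer callback: queued for a later turn of the event loop.
    setTimeout(() => {
      record('timer');
      resolve(order);
    }, 0);

    // Promise callback (microtask): runs right after the current synchronous code.
    Promise.resolve().then(() => record('microtask'));

    // Plain synchronous code: runs first.
    record('sync');
  });
}

const eventLoopDemo = demo();
eventLoopDemo.then((result) => console.log(result.join(' -> ')));
// sync -> microtask -> timer
```

Nothing here runs in parallel: each callback simply waits for its turn, which is exactly what lets a scraper keep many requests in flight without threads.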

A quick example

Here's a tiny web server to show how the event loop behaves:

import http from 'http';

const PORT = 3000;

const server = http.createServer((req, res) => {

res.statusCode = 200;

res.setHeader('Content-Type', 'text/plain');

res.end('Hello World');

});

server.listen(PORT, () => {

console.log(`Server running at http://localhost:${PORT}/`);

});

We import the http module, create a server with a request-handler callback, and start listening on port 3000. Nothing wild, but the important bits are already hiding in plain sight:

- The function passed to createServer is a callback triggered by incoming requests.

- listen doesn't block anything. It returns instantly.

Most languages would block on something like accept() until a new client connects, forcing you into threads or async frameworks. Node.js, on the other hand, stays single-threaded and lets the event loop orchestrate all callbacks behind the scenes.

Why this matters

listen returns immediately, but your script doesn't exit. Why? Because the event loop knows there's a registered callback (your request handler). As long as something is "on the hook," Node.js stays alive. When a client connects, Node.js parses the request in the background and calls your handler without spinning up new threads or making you micromanage concurrency.

The only rule: don't block. As long as you don't do anything cursed like:

while (true) {}

...you're good. Most APIs in Node.js are already async anyway, so it's pretty hard to accidentally freeze the event loop unless you try.

Try it yourself

Save the snippet above as MyServer.js and run:

node MyServer.js

Then open localhost:3000 — and boom, "Hello World." That was easy, wasn't it?

But what about performance?

People often assume "single-threaded" means slow. In reality, for I/O-heavy tasks (which includes a ton of web scraping with JavaScript), the async model is insanely efficient. No need to pre-allocate threads or manage pools as Node.js just runs lightweight callbacks as events come in.
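Here's a rough sketch of why that works out so well for I/O. It assumes a stand-in fakeRequest helper (hypothetical; a real scraper would call fetch() instead) that simply resolves after a delay, and it runs three of them at once.

```javascript
// Stand-in for a network request: resolves after `ms` milliseconds.
// (Hypothetical helper; a real scraper would call fetch() here.)
const fakeRequest = (name, ms) =>
  new Promise((resolve) => setTimeout(() => resolve(name), ms));

async function runConcurrently() {
  const started = Date.now();
  // All three "requests" are in flight at once on a single thread.
  const results = await Promise.all([
    fakeRequest('page-1', 100),
    fakeRequest('page-2', 100),
    fakeRequest('page-3', 100),
  ]);
  return { results, elapsedMs: Date.now() - started };
}

const concurrencyDemo = runConcurrently();
concurrencyDemo.then(({ results, elapsedMs }) => {
  // Total time is roughly one delay (about 100 ms), not the sum of all three.
  console.log(results, `${elapsedMs} ms`);
});
```

The waits overlap because the thread is free while each "request" is pending, so the total is close to the slowest request rather than the sum.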

All right, that's enough theory for now! You came here for web scraping with Node.js and JavaScript, so let's get down to business.

Setting up a Node.js scraping project

Time to get our hands dirty. Before we build anything fancy, let's set up a tiny "hello world" scraper so you can learn web scraping with Node.js in the most painless way possible. Since we're on Node.js 20+, we can utilize the built-in fetch API to send HTTP requests. I don't really like introducing dependencies that are not mandatory.

Let's make a simple Node.js web scraper that grabs the front page titles from Hacker News.

1. Initialize the project

Create a new folder, jump into it, and initialize your project:

mkdir node-scraping-demo

cd node-scraping-demo

npm init -y

This creates a package.json with zero questions asked, nice for quick scraping experiments.

Before moving on, make sure to open package.json and replace the "type": "commonjs", line with:

"type": "module",

This way you'll be able to use import like a boss.

2. Install Cheerio

We'll use Cheerio to parse the HTML. It's lightweight, fast, and plays well with fetch.

npm install cheerio

Learn how to use Cheerio for web scraping in our tutorial.

3. Create a scraper file

Make a file in the project root called scrape.js and drop this in:

import * as cheerio from 'cheerio';

const URL = 'https://news.ycombinator.com';

async function run() {

const res = await fetch(URL);

const html = await res.text();

const $ = cheerio.load(html);

const titles = [];

$('.athing .titleline > a').each((_i, el) => {

titles.push($(el).text());

});

console.log('Top HN titles:');

console.log(titles);

}

try {

await run();

} catch (e) {

console.error('Scraper failed:', e);

}

Key points to note:

- import * as cheerio from 'cheerio' pulls in Cheerio, which is basically jQuery for server-side scraping and makes it easy to pick elements from the HTML.

- const res = await fetch(URL) fetches the page with Node's built-in fetch. No extra libraries, no overhead. It's async because the request takes time.

- const html = await res.text() turns the response into raw HTML so Cheerio can work with it.

- const $ = cheerio.load(html) loads the HTML into Cheerio and gives us the $ function. Now we can query the page easily.

- $('.athing .titleline > a').each((_i, el) => { ... }) selects only the main link inside each Hacker News story and loops through the matches. The > a part avoids grabbing the domain link.

- titles.push($(el).text()) grabs the text of each title and tosses it into our array.

- console.log(titles) prints the scraped titles. The moment of truth.

4. Run it

Just hit:

node scrape.js

You'll see something like this:

Top HN titles:

[

'Voyager 1 Is About to Reach One Light-Day from Earth',

'OpenAI needs to raise at least $207B by 2030 so it can continue to lose money',

`I don't care how well your "AI" works`,

'A cell so minimal that it challenges definitions of life',

...

]

Great, you just built a working Node.js web scraping example that fetches and parses real data.

Why this setup?

You don't need a huge stack to get started. For many projects, fetch and Cheerio are all you need to prototype, test ideas, or build quick tools. The combo is beginner-friendly but also powerful enough for a ton of real scraping tasks. If you read the prerequisites above, you're already more than prepared for this.
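One thing the Hacker News script skips is checking whether the request actually succeeded. Here's a minimal sketch of a slightly more defensive helper. The fetchHtml name, the User-Agent string, and the injectable fetchImpl parameter are assumptions made for this example, not part of any library.

```javascript
// Hypothetical helper: fetch a page and fail loudly on non-2xx responses.
// `fetchImpl` is injectable so the function can be exercised without a network.
async function fetchHtml(url, fetchImpl = fetch) {
  const res = await fetchImpl(url, {
    headers: { 'User-Agent': 'node-scraping-demo/1.0' }, // assumed UA string
  });
  if (!res.ok) {
    throw new Error(`Request to ${url} failed with status ${res.status}`);
  }
  return res.text();
}

// Usage inside an async function:
//   const html = await fetchHtml('https://news.ycombinator.com');
//   const $ = cheerio.load(html);
```

Throwing on a bad status keeps an error page from being silently parsed as if it were real content.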

HTTP clients: Querying the web

An HTTP client is basically the tool that sends a request to a server and waits for whatever comes back. Every scraping setup (no matter how fancy) sits on top of an HTTP client. If you're doing web scraping in Node.js, you're going to hit the web a lot, so it's worth knowing your options.

1. Built-In HTTP client

Node.js ships with its own HTTP module, and yeah, it works. It can send requests, receive responses, and it's always available with zero installs. But it's also... kinda barebones. Think "minimum viable HTTP client," not "nice developer experience."

Example:

import http from 'http';

const req = http.request('http://example.com', res => {

const data = [];

res.on('data', chunk => data.push(chunk));

res.on('end', () => console.log(Buffer.concat(data).toString()));

});

req.end();

This does the job, but as you can see, you're dealing with chunks, streams, manual stitching, and callbacks. And want HTTPS? Congrats — that's a different module entirely. So, it's cool that Node gives this to you out of the box, but you'll probably outgrow it fast once you start doing real scraping work.

Time to look at nicer tools.

2. Fetch API

Another built-in option is the Fetch API. Browsers had it forever, but Node.js only caught up in v18. Honestly, better late than never. From that version on, you can just call fetch() in Node like it's no big deal.

If you're curious, we also have a separate article on web scraping with node-fetch.

Fetch is Promise-based, plays perfectly with async/await, and keeps your code clean instead of drowning you in callbacks or chunk-handling. I love the Fetch API and all my homies do.

Example:

async function fetch_demo() {

const resp = await fetch('https://www.reddit.com/r/programming.json', {

headers: { 'User-Agent': 'MyCustomAgentString' },

});

console.log(JSON.stringify(await resp.json(), null, 2));

}

await fetch_demo();

So, just call fetch(), wait for the response, call .json() on it, and you get parsed JSON with zero effort. No HTTP/HTTPS split, no chunk stitching, no unnecessary boilerplate.

If you need something more custom, fetch also accepts an options object where you can tweak the method, headers, authentication, or anything else you'd expect from a real HTTP client.
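As a rough illustration of that options object, here's a sketch of a POST request with custom headers, a bearer token, and a timeout. The postJson name, the endpoint, and the token are placeholders invented for the example; AbortSignal.timeout() is the standard way to cancel a slow request.

```javascript
// Sketch of a POST request with custom headers and a timeout.
// `postJson`, the URL, and the token are placeholders, not a real API.
async function postJson(url, payload, { token, timeoutMs = 5000, fetchImpl = fetch } = {}) {
  const res = await fetchImpl(url, {
    method: 'POST',
    headers: {
      'Content-Type': 'application/json',
      // Only send the Authorization header when a token was provided.
      ...(token ? { Authorization: `Bearer ${token}` } : {}),
    },
    body: JSON.stringify(payload),
    // Abort the request if it takes longer than `timeoutMs`.
    signal: AbortSignal.timeout(timeoutMs),
  });
  if (!res.ok) throw new Error(`HTTP ${res.status}`);
  return res.json();
}

// Usage inside an async function:
//   const data = await postJson('https://api.example.com/search', { q: 'node' }, { token: 'secret' });
```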

3. Axios

Axios is another popular HTTP client that feels pretty close to Fetch. It's Promise-based, simple to use, and it works in both browsers and Node.js. TypeScript fans especially like it because it ships with solid type definitions out of the box.

The downside? Unlike Fetch, you actually have to install it:

npm install axios

Here's a basic async/await example:

import axios from 'axios';

async function getForum() {

try {

const response = await axios.get(

'https://www.reddit.com/r/programming.json'

);

console.log(response.data);

} catch (error) {

console.error(error);

}

}

await getForum();

Axios is still a solid pick for many Node.js web scraper setups, especially if you want nice defaults and built-in transforms. But with modern Node.js having native fetch(), a lot of devs prefer staying dependency-free unless they really need Axios' features.

4. Ky

If Fetch is your chill built-in option and Axios is the classic all-rounder, Ky is the lightweight modern kid that showed up and said: "hey, what if we just made fetch nicer?"

Ky is built on top of the Fetch API, but with a much cleaner developer experience. It's ESM-only and pleasant to use. People like Ky because it has a tiny footprint, provides an elegant API layered on top of fetch, handles JSON automatically, and offers built-in retries.

Install it:

npm install ky

Example usage in a simple Node.js web scraper:

import ky from 'ky';

const URL = 'https://news.ycombinator.com';

async function scrape() {

try {

const html = await ky(URL).text();

console.log(html.slice(0, 300)); // peek at the first 300 chars

} catch (err) {

console.error('Ky failed:', err.message);

}

}

await scrape();

Since Ky sits directly on top of the Fetch API, it feels very natural if you already know fetch, just with fewer footguns. And for learning web scraping with Node.js, it's honestly one of the cleanest ways to send requests without pulling in a huge dependency.

5. Node Crawler

The HTTP clients we've talked about so far just send requests and give you back responses. They don't care what you do with the HTML after. For simple web scraping in Node.js that's fine: you grab the page, pass it to Cheerio, do your thing. But sometimes you want more structure: crawling multiple pages, keeping a queue, respecting rate limits, reusing one "handler" across many URLs, etc. That's where something like Crawler comes in.

You can think of it as a small scraping framework for Node.js, a bit like Scrapy for Python, but on the JavaScript side.

Install it with npm:

npm install crawler

Here's a quick example that starts from the ScrapingBee blog index and then crawls each article page to pull some basic info:

import Crawler from 'crawler';

const BLOG_URL = 'https://www.scrapingbee.com/blog/';

const crawler = new Crawler({

maxConnections: 1,

callback: (error, res, done) => {

if (error) {

console.error(error);

done();

return;

}

const { url, $ } = res;

if (url === BLOG_URL) {

// On the main blog page: collect all article links and add them to the queue

const links = $('a[href^="/blog/"]');

links.each((_i, el) => {

const href = el.attribs.href;

if (!href) return;

crawler.add(`https://www.scrapingbee.com${href}`);

});

} else {

// On an individual blog page: extract some data

const title = $('title').text().trim();

const readTime = $('#content span.text-blue-200').text().trim();

console.log({ title, readTime });

}

done();

},

});

// Kick off the crawl with the blog index page

crawler.add(BLOG_URL);

What's happening here:

- We configure a single callback that runs for every crawled page.

- If the current URL is the blog index, we grab all article URLs and push them into the queue with crawler.add.

- If it's a blog post URL, we scrape the title and estimated reading time and log them.

- The crawler keeps going until the queue is empty.

Here's the scraping result:

{

title: 'Web Scraping without getting blocked (2026 Solutions) | ScrapingBee',

readTime: '(updated)28 min read'

}

{

title: 'Python Web Scraping: Full Tutorial With Examples (2026) | ScrapingBee',

readTime: '(updated)41 min read'

}

...

For small projects, fetch and Cheerio are usually enough. But once your JavaScript web scraping starts to look more like "crawl this whole section of the site and follow links," a framework like Crawler can save you from hand-rolling a queue, rate limiting, and all that extra plumbing.
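To give a feel for the plumbing a framework takes off your hands, here's a bare-bones sketch of a hand-rolled crawl queue. This is not how Crawler is implemented. The crawl function and its fetchLinks callback (which you'd write yourself, for example with fetch and Cheerio) are hypothetical names for this illustration.

```javascript
// Minimal breadth-first crawl queue (illustrative; not Crawler's implementation).
// `fetchLinks(url)` is a function you supply: it returns the links found on a page.
async function crawl(startUrl, { fetchLinks, maxPages = 50, delayMs = 0 }) {
  const visited = new Set();
  const queue = [startUrl];

  while (queue.length > 0 && visited.size < maxPages) {
    const url = queue.shift();
    if (visited.has(url)) continue; // skip pages we've already seen
    visited.add(url);

    const links = await fetchLinks(url);
    for (const link of links) {
      if (!visited.has(link)) queue.push(link);
    }

    // Optional pause between pages to stay polite.
    if (delayMs > 0) await new Promise((resolve) => setTimeout(resolve, delayMs));
  }

  return [...visited];
}
```

Even this toy version needs a visited set, a page cap, and a delay. Concurrency, retries, and error handling would come on top, which is where a framework starts to pay off.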

6. SuperAgent

SuperAgent is another HTTP client you might bump into while doing web scraping in Node.js and JavaScript. It's been around for a long time, supports Promises and async/await, and has a pretty clean API. These days it's not as popular as Axios or just using fetch, but it's still a decent tool, especially if you like its plugin system.

Install it with:

npm install superagent

Here's what a simple request looks like with modern syntax:

import superagent from 'superagent';

const FORUM_URL = 'https://www.reddit.com/r/programming.json';

async function getForum() {

try {

const response = await superagent

.get(FORUM_URL)

.set('User-Agent', 'my-nodejs-web-scraper/1.0');

console.log(response.body);

} catch (error) {

console.error('Request failed:', error.message);

}

}

await getForum();

Pretty straightforward: call .get(), optionally set headers, await the response, and use response.body to access the parsed JSON.

SuperAgent plugins

One thing that makes SuperAgent a bit special is its plugin system. It supports a bunch of plugins that let you tweak requests and responses without rewriting your own middleware every time.

For example, the superagent-throttle plugin can help you limit how many requests you fire per second, which is handy when your Node.js web scraper needs to be polite and not DDoS a site by accident.
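If you're curious what throttling boils down to, here's a generic sketch of the idea. To be clear, this is not the superagent-throttle API; the throttle function below is a hypothetical helper that wraps any async function so that calls start at least a given interval apart.

```javascript
// Generic rate limiter sketch (NOT the superagent-throttle API):
// wraps an async function so calls start at least `minIntervalMs` apart.
function throttle(fn, minIntervalMs) {
  let nextSlot = 0; // earliest timestamp at which the next call may start

  return async (...args) => {
    const now = Date.now();
    const startAt = Math.max(now, nextSlot);
    nextSlot = startAt + minIntervalMs; // reserve the following slot

    if (startAt > now) {
      await new Promise((resolve) => setTimeout(resolve, startAt - now));
    }
    return fn(...args);
  };
}

// Usage: at most one request per second.
//   const politeFetch = throttle((url) => fetch(url), 1000);
//   const res = await politeFetch('https://example.com');
```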

In practice, most people will reach for fetch, Axios, or Ky first. But if you like the way SuperAgent feels and you want some of its plugin-based features, it's still a perfectly valid choice in your scraping toolbox.

Comparison of the different libraries

| Library | ✔️ Pros | ❌ Cons |
|---|---|---|
| HTTP package | Supported out of the box | Callback-heavy; separate modules for HTTP/HTTPS; very verbose |
| Fetch | Built-in; Promise-based, great with await | Limited advanced config; no retries/backoff by default |
| Axios | Nice defaults; great TypeScript support | Extra dependency; heavier bundle |
| Ky | Lightweight modern wrapper over fetch; retries + hooks + clean API | ESM-only; smaller ecosystem |
| Crawler | Full crawling framework; queue, rate limiting, HTML parsing | Less flexible; overkill for simple scrapers |
| SuperAgent | Plugin system; flexible request pipeline | Extra dependency; less popular nowadays |
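Since the table lists "no retries/backoff by default" as a downside of plain fetch, here's a small sketch of what a hand-written retry wrapper could look like. The fetchWithRetry name, its defaults, and the choice to retry only on network errors and 5xx responses are assumptions for this example.

```javascript
// Retry wrapper sketch: retries on network errors and 5xx responses,
// doubling the wait each time. Names and defaults are illustrative.
async function fetchWithRetry(url, { retries = 3, baseDelayMs = 500, fetchImpl = fetch } = {}) {
  for (let attempt = 0; ; attempt++) {
    try {
      const res = await fetchImpl(url);
      // Treat 5xx as retryable; hand everything else back to the caller.
      if (res.status < 500) return res;
      if (attempt >= retries) return res; // out of retries: return the last response
    } catch (err) {
      if (attempt >= retries) throw err; // out of retries: surface the error
    }

    // Exponential backoff: baseDelayMs, then 2x, then 4x, and so on.
    const delayMs = baseDelayMs * 2 ** attempt;
    await new Promise((resolve) => setTimeout(resolve, delayMs));
  }
}

// Usage inside an async function:
//   const res = await fetchWithRetry('https://example.com', { retries: 2 });
```

With retries set to 3, the function makes at most four attempts in total. Libraries like Ky ship this kind of logic for you, which is a fair reason to reach for them.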

Data extraction in JavaScript

Fetching a page is only the opening act. The real fun in web scraping in Node.js and JavaScript starts when you actually dig into the HTML and pull out the data you care about. So let's talk about how to handle the HTML you download and how to select the pieces you want.

And yeah... we'll start with regular expressions, because everyone uses them at least once. 😅

Regular expressions: The hard way

The absolute bare-bones way to "scrape" something is to pull the HTML as a string and run regexes on it. No dependencies, no DOM, no fancy libraries, just raw text matching.

Sounds simple enough, but here's the catch: regex and HTML mix about as well as oil and holy water. HTML is structured, nested, weirdly formatted, sometimes broken, and regex is just not built for that. You can use it for tiny, clean snippets, but anything more than that becomes pain and suffering.

But hey, let's still show a quick example so you see what it looks like:

const htmlString = '<label>Username: John Doe</label>';

const result = htmlString.match(/<label>Username: (.+)<\/label>/);

console.log(result[1]);

// John Doe

Here we use String.match(), which returns an array where the capturing group (.+) lands in result[1].

This works, but only for exactly one label. Add multiple labels, nested elements, line breaks, weird spacing, or optional attributes, and suddenly your "simple" regex turns into a 12-armed creature. Regular expressions are an amazing tool, just not for parsing HTML. So instead of fighting the universe, let's move on to the fun stuff: CSS selectors and the DOM, where scraping actually becomes pleasant.
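To see how quickly things go sideways, here's a small sketch that runs the same pattern against two labels instead of one. The second label (Jane Roe) is made up for the illustration.

```javascript
const html =
  '<label>Username: John Doe</label><label>Username: Jane Roe</label>';

// Greedy (.+) runs to the LAST closing tag and swallows the markup in between.
const greedy = html.match(/<label>Username: (.+)<\/label>/);
console.log(greedy[1]);
// John Doe</label><label>Username: Jane Roe

// A lazy (.+?) with the global flag handles this simple case...
const names = [...html.matchAll(/<label>Username: (.+?)<\/label>/g)].map((m) => m[1]);
console.log(names);
// [ 'John Doe', 'Jane Roe' ]
```

The lazy version rescues this particular string, but it breaks again as soon as a label gains an attribute or a nested tag, which is the point where a real parser earns its keep.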

Cheerio: jQuery-style DOM traversal for scraping

When you're doing web scraping in Node.js and JavaScript, you usually don't want to parse HTML manually. That's where Cheerio shines. It's lightweight, fast, and gives you a familiar jQuery-like API for selecting and traversing elements on the server. If you've ever written $('.my-class') in jQuery, you already know 90% of Cheerio.

What Cheerio is (and isn't)

Cheerio parses HTML into a DOM tree you can navigate with jQuery-style selectors. It's perfect for:

- scraping static HTML

- grabbing text, attributes, links, etc.

- walking the DOM with selectors

- chaining operations like .text(), .attr(), .find(), etc.

But keep in mind:

- It does not run JavaScript

- It does not emulate a browser

- It cannot execute AJAX, events, clicks, etc.

If the site is JavaScript-heavy, you'll likely need a headless browser. But for static pages Cheerio is fast, clean, and honestly a joy to use.

Cheerio basics

import * as cheerio from 'cheerio';

const $ = cheerio.load('<h2 class="title">Hello world</h2>');

$('h2.title').text('Hello there!');

$('h2').addClass('welcome');

console.log($.html());

// <html><head></head><body><h2 class="title welcome">Hello there!</h2></body></html>

This is pure jQuery energy on the server.

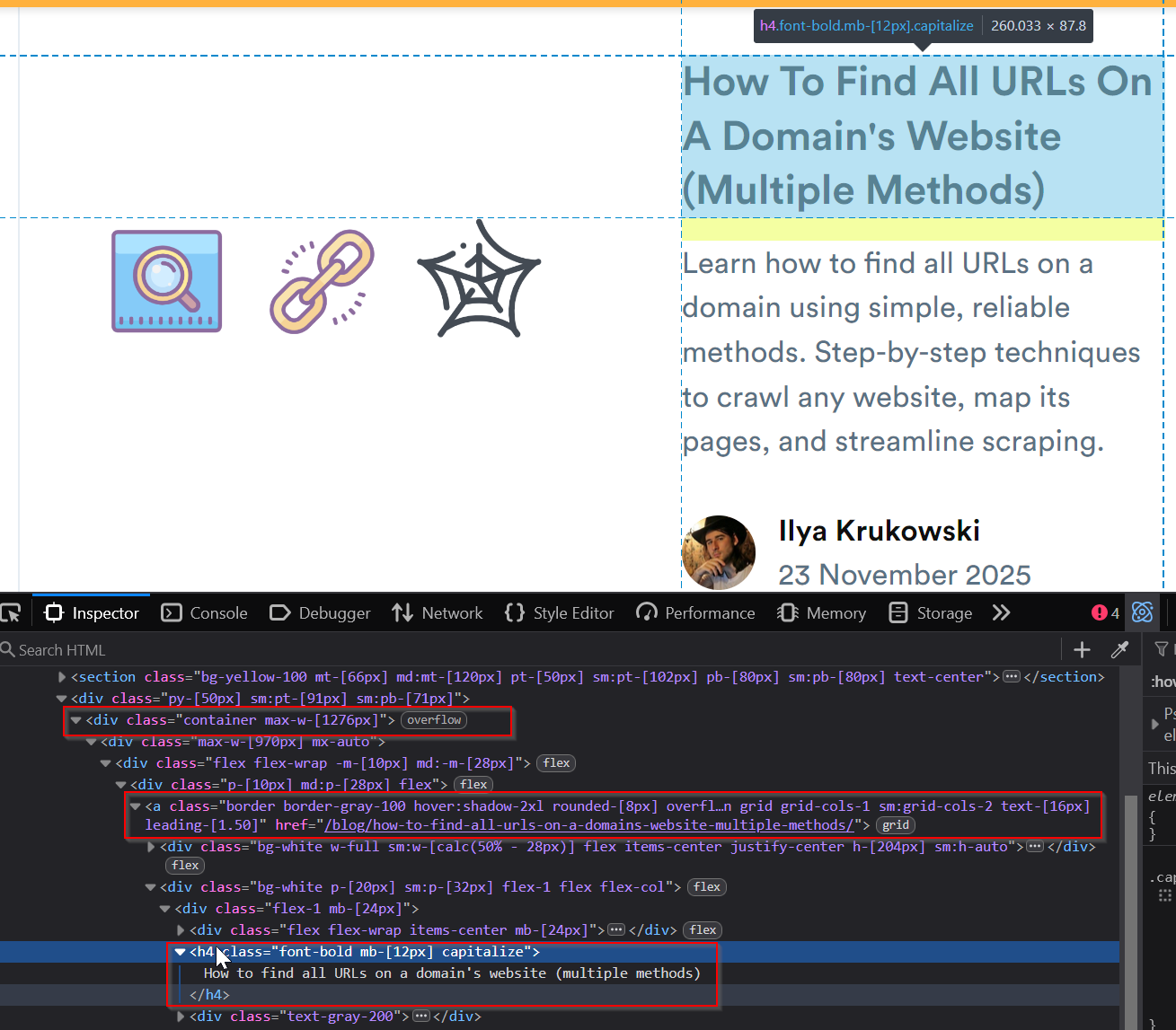

Crawling a real page with Cheerio

Let's do a real example: scrape the ScrapingBee blog and grab the names of all posts. We'll use fetch, because lightweight gang for life.

Install Cheerio if you haven't already:

npm install cheerio

Create or update the crawler.js file:

import * as cheerio from 'cheerio';

const BLOG_URL = 'https://www.scrapingbee.com/blog/';

async function getPostTitles() {

try {

const resp = await fetch(BLOG_URL);

const html = await resp.text();

const $ = cheerio.load(html);

const titles = [];

$('#content .container a.border.border-gray-100[href^="/blog/"] h4').each((_i, el) => {

const title = $(el).text().trim();

if (title) titles.push(title);

});

return titles;

} catch (err) {

console.error('Scraping failed:', err.message);

return [];

}

}

const titles = await getPostTitles();

console.log(titles);

We use a relatively involved selector here because the h4 headings are pretty hard to catch, just like magical beasts.

Therefore I always recommend using the Inspector tool built into all modern browsers to understand how the page markup looks. Unfortunately, the markup might change in the future, but there's not much we can do about it.

Cheerio takes the HTML from the blog page and loads it into $. From there you can:

- inspect elements

- use CSS selectors

- extract text or attributes

- loop through results with .each()

It's basically "jQuery without the browser."

Sample output

Run the script:

node crawler.js

You'll see something like this:

[

"How to find all URLs on a domain's website (multiple methods)",

'The best Python HTTP clients for web scraping',

'How to download an image with Python?',

'How to send a POST with Python Requests?',

'How to use a proxy with HttpClient in C#'

]

Simple and nice for static sites.

If you're also using proxies, check out our guide on the Node.js Axios proxy.

jsdom: The DOM for Node

While Cheerio gives you jQuery-like scraping, jsdom goes one step further: it recreates the browser's actual DOM API inside Node.js. So instead of just parsing HTML, you get:

- real document and window

- real querySelector(), classList, getElementById()

- the ability to run the page's JavaScript

This makes jsdom feel like a mini browser engine without being a full browser like Playwright or Puppeteer.

What jsdom does well

- parses HTML into a DOM tree

- lets you use native browser DOM APIs

- can optionally run <script> tags and inline JS

- can load external scripts if you enable it

- lets you "interact" with elements (click(), modify attributes, etc.)

But remember: it's not a full browser. No rendering engine, no CSS layout, no network stack like Chrome. It's still lightweight and great for many scraping use cases.

Basic example

Install jsdom by running:

npm install jsdom

Here's a simple DOM manipulation example:

import { JSDOM } from 'jsdom';

const dom = new JSDOM('<h2 class="title">Hello world</h2>');

const document = dom.window.document;

const heading = document.querySelector('.title');

heading.textContent = 'Hello there!';

heading.classList.add('welcome');

console.log(heading.outerHTML);

// <h2 class="title welcome">Hello there!</h2>

Feels just like writing browser code, except it's all running in Node.js.

Running embedded JavaScript (the spicy part)

Where jsdom stands out is its ability to execute the page's scripts. Let's simulate clicking a button that injects a <div> into the page:

import { JSDOM } from 'jsdom';

const HTML = `

<html>

<body>

<button onclick="const e = document.createElement('div'); e.id = 'myid'; this.parentNode.appendChild(e);">Click me</button>

</body>

</html>

`;

const dom = new JSDOM(HTML, {

runScripts: 'dangerously',

resources: 'usable'

});

const document = dom.window.document;

const button = document.querySelector('button');

console.log('Element before click:', document.querySelector('#myid'));

button.click();

console.log('Element after click:', document.querySelector('#myid'));

Key points:

- HTML is just a small test page with a button whose onclick creates a new <div id="myid">.

- We create a jsdom instance and enable script execution with runScripts: "dangerously" so the inline JS can actually run.

- document works just like in a browser, so we grab the <button> with querySelector.

- Before clicking, #myid doesn't exist, so we log null.

- When we call button.click(), jsdom executes the inline JavaScript, which creates the <div>.

- After the click, querying #myid returns the new element, proving the script executed inside jsdom.

Output:

Element before click: null

Element after click: HTMLDivElement {}

The button's inline JavaScript executed exactly like it would in a browser.

What makes this work?

Two important options:

- runScripts: allows executing <script> tags or inline JS

- resources: allows loading external scripts

The jsdom documentation warns that with runScripts: "dangerously", a malicious site could break out of the sandbox and mess with your machine. So, proceed with caution.

When to use jsdom (and when not to)

jsdom is excellent when:

- the site is mostly static

- you need browser-like DOM APIs

- you want to execute simple JavaScript on the page

- you don't need a real browser's rendering engine

But it still falls short for:

- websites with heavy frontend frameworks (React, Angular, Vue)

- complex SPA navigation

- pages requiring full browser features or Web APIs

- anything involving real user interactions, cookies, logins, captchas, etc.

For that, you'll want a headless browser.

💡 New cool thing: you can now extract data from HTML with a simple API call. Check out the docs.

Example: Scraping a real website with Node.js

Time to glue everything together and build a real web scraper script, not just toy snippets. We'll use Node.js scraping with fetch and Cheerio to pull quotes from quotes.toscrape.com and output clean JSON.

Goal: for each quote on the first page, grab:

- the quote text

- the author

- the list of tags

Then print it as structured JSON.

1. What we'll use

For this example of Node.js web scraping we'll stick to a minimal stack:

- fetch – built into Node.js 18+

- cheerio – to parse HTML and query it with CSS selectors

Install Cheerio:

npm install cheerio

Make sure you have the following line in your package.json:

{

"type": "module"

}

2. The scraper code

Create a file called scrape-quotes.js and drop this in:

import * as cheerio from 'cheerio';

const URL = 'https://quotes.toscrape.com/';

async function scrapeQuotes() {

const res = await fetch(URL);

if (!res.ok) {

throw new Error(`Request failed with status ${res.status}`);

}

const html = await res.text();

const $ = cheerio.load(html);

const quotes = [];

$('.quote').each((_i, el) => {

const text = $(el).find('.text').text().trim();

const author = $(el).find('.author').text().trim();

const tags = [];

$(el)

.find('.tags .tag')

.each((_j, tagEl) => {

tags.push($(tagEl).text().trim());

});

quotes.push({ text, author, tags });

});

return quotes;

}

async function main() {

try {

const quotes = await scrapeQuotes();

console.log(JSON.stringify(quotes, null, 2));

} catch (err) {

console.error('Scraping failed:', err.message);

process.exit(1);

}

}

await main();

What this script does, step by step:

- fetches the page HTML with fetch(URL)

- loads it into Cheerio with cheerio.load(html)

- loops over every .quote block

- pulls out .text, .author, and .tag elements

- builds a plain JS object for each quote

- prints the whole array as JSON

Classic Node.js scraping pattern: fetch → parse → extract → output.

3. Running the scraper

Run it with:

node scrape-quotes.js

You should see something like:

[

{

"text": "The world as we have created it is a process of our thinking. It cannot be changed without changing our thinking.",

"author": "Albert Einstein",

"tags": [

"change",

"deep-thoughts",

"thinking",

"world"

]

},

{

"text": "It is our choices, Harry, that show what we truly are, far more than our abilities.",

"author": "J.K. Rowling",

"tags": [

"abilities",

"choices"

]

}

]

Now you've got a real Node.js web scraping script: small, readable, and easy to extend. From here you can:

- paginate through more pages

- save the JSON to a file or database

- hook this into an API or a cron job

All with the same basic pattern and tools you just used.
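As a taste of the first item, here's a rough pagination sketch. It assumes you supply a scrapePage function (hypothetical) that scrapes one URL and returns the extracted items plus the href of the "next page" link, or null on the last page. On quotes.toscrape.com that link can probably be found with a selector along the lines of li.next a, but treat that as an assumption and check the markup in DevTools.

```javascript
// Pagination sketch. `scrapePage` is a hypothetical function you supply: it takes a
// URL and returns { items, nextHref }, where nextHref is the "next page" link's href
// (or null on the last page).
async function scrapeAllPages(startUrl, scrapePage, maxPages = 10) {
  const all = [];
  let url = startUrl;

  for (let page = 0; url && page < maxPages; page++) {
    const { items, nextHref } = await scrapePage(url);
    all.push(...items);

    // Resolve relative links such as "/page/2/" against the current URL.
    url = nextHref ? new URL(nextHref, url).href : null;
  }

  return all;
}

// Usage inside an async function:
//   const quotes = await scrapeAllPages('https://quotes.toscrape.com/', scrapePage);
```

The maxPages cap is a cheap safety net against endless loops if a site's "next" link ever points back at itself.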

The challenges of web scraping at scale

Scraping a single page with Node.js is very easy. But scraping thousands or millions of pages reliably? That's where the real boss fight begins. Once you scale, websites stop being friendly and start activating defenses. Your cute little Node.js web scraping script suddenly needs to deal with real-world infrastructure problems.

Let's break down the usual pain points.

1. IP blocking

If too many requests come from the same IP, websites eventually say "nah bro, you're clearly a bot," and block you.

At scale you probably need rotating proxies, IP diversity, and smart request scheduling. Doing that manually is a nightmare.
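The rotation part on its own is simple. Here's a minimal round-robin sketch where createRotator is a made-up helper and the proxy URLs are placeholders. How you actually hand the chosen proxy to your HTTP client depends on the client and isn't shown here.

```javascript
// Round-robin rotation sketch. `createRotator` is a made-up helper and
// the proxy URLs are placeholders.
function createRotator(items) {
  if (items.length === 0) throw new Error('Nothing to rotate');
  let index = 0;

  return () => {
    const item = items[index];
    index = (index + 1) % items.length; // wrap around at the end of the list
    return item;
  };
}

const nextProxy = createRotator([
  'http://proxy-1.example.com:8080',
  'http://proxy-2.example.com:8080',
  'http://proxy-3.example.com:8080',
]);

// Each call hands out the next proxy; the fourth call wraps back to proxy-1.
console.log(nextProxy(), nextProxy(), nextProxy(), nextProxy());
```

The hard parts are everything around it: sourcing healthy proxies, dropping dead ones, and spreading requests so no single IP gets hammered.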

2. Bot detection

Modern sites don't just count requests. They inspect everything: headers, cookies, TLS fingerprints, timing patterns, JavaScript execution, and mouse/scroll simulations.

A plain "fetch with Cheerio" script won't pass for a human for long.
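The cheapest first step is to at least send browser-like headers and to notice when you get blocked. Here is a small sketch of that. The header values are illustrative assumptions, and the fetchLikeBrowser helper is invented for this example. Headers alone will not get past TLS fingerprinting or JavaScript challenges.

```javascript
// Browser-like request headers. The values are illustrative assumptions;
// copy real ones from your own browser's network tab.
const BROWSER_HEADERS = {
  'User-Agent':
    'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36',
  Accept: 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
  'Accept-Language': 'en-US,en;q=0.9',
};

// Fetch a page with those headers and fail loudly on non-2xx responses
// (403 and 429 are common signs of being blocked or rate limited).
// `fetchImpl` is injectable so the function can be exercised without network.
async function fetchLikeBrowser(url, fetchImpl = fetch) {
  const res = await fetchImpl(url, { headers: BROWSER_HEADERS });
  if (!res.ok) {
    throw new Error(`Request failed with status ${res.status} for ${url}`);
  }
  return res.text();
}

// Usage sketch:
// const html = await fetchLikeBrowser('https://quotes.toscrape.com/');
```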

3. CAPTCHAs

reCAPTCHA, hCaptcha, Turnstile... Once these guys appear, your scraper is effectively dead unless you build or buy a CAPTCHA-solving pipeline, which is slow, expensive, and annoying to maintain.

4. JavaScript-heavy and dynamic sites

Many websites don't show content until JavaScript runs. Single-page apps (SPAs) require executing JS, rendering components, handling dynamic requests, and scrolling, clicking, or waiting for the DOM to settle.

This pushes you toward headless browsers, which are great but slow and hard to scale.

Enter ScrapingBee: The sane way to scale scraping

When you're dealing with rotating proxies, bot detection, CAPTCHAs, dynamic rendering, and browser automation, you can spend weeks building your own scraping infrastructure. Or you can use a dedicated web scraping API that already handles all of these headaches.

ScrapingBee is designed for exactly this:

- full-browser rendering when needed

- smart proxy rotation

- JS execution

- anti-bot avoidance

- simple API that works with any JavaScript setup

- perfect companion for dynamic web scraping Node.js workflows

Scraping small is easy. Scraping big requires actual infrastructure. ScrapingBee gives you that infrastructure so you don't have to build it yourself.

ScrapingBee API

ScrapingBee provides a web scraping API designed to handle all the ugly parts of scraping so your Node.js code can stay clean and simple.

ScrapingBee gives you:

- premium rotating proxies

- browser rendering (full Playwright under the hood)

- automatic CAPTCHA avoidance

- built-in HTML extraction

- and even an AI scraping mode where you just describe what you want

No headless browsers to manage. No proxy lists. No sleepless nights debugging 403s.

Getting started

First, register at ScrapingBee to grab a free API key with 1000 credits.

Install the Node.js client:

npm install scrapingbee

Extracting structured data with CSS selectors

Let's see a basic example to get structured data from a URL using CSS selectors:

import { ScrapingBeeClient } from 'scrapingbee';

const client = new ScrapingBeeClient(process.env.SB_KEY);

async function getBlog(url) {

const response = await client.get({

url,

params: {

extract_rules: {

title: 'h1',

author: '#content div.avatar + strong'

}

}

});

const decoder = new TextDecoder();

console.log(decoder.decode(response.data));

}

await getBlog('https://www.scrapingbee.com/blog/best-javascript-web-scraping-libraries/');

Make sure you set your ScrapingBee API key in the SB_KEY environment variable, which the script reads via process.env.SB_KEY.

Here's the result:

{"title": "The Best JavaScript Web Scraping Libraries", "author": "Ilya Krukowski"}

ScrapingBee fetches the page, applies the CSS selectors on the server side, and returns clean JSON.
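Since the decoded response is a JSON string, you will usually want to parse it into an object before using it. A minimal sketch, assuming response.data is a Buffer, ArrayBuffer, or typed array holding UTF-8 JSON (the parseExtracted helper is made up for this example):

```javascript
// Decode the raw bytes returned by the API and parse them as JSON.
// Assumes `data` is a Buffer, ArrayBuffer, or typed array containing UTF-8 JSON.
function parseExtracted(data) {
  const text = new TextDecoder('utf-8').decode(data);
  return JSON.parse(text);
}

// Usage inside getBlog(), after `const response = await client.get(...)`:
// const { title, author } = parseExtracted(response.data);
// console.log(`${title} by ${author}`);
```

JSON.parse throws on malformed input, so if the API ever returns an error page instead of JSON, wrap the call in try/catch and log the decoded text.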

AI web scraping example

ScrapingBee also has an AI extraction mode. Don't specify selectors — just ask for what you want.

import { ScrapingBeeClient } from 'scrapingbee';

const client = new ScrapingBeeClient(process.env.SB_KEY);

async function summarizeBlog(url) {

const response = await client.get({

url,

params: {

ai_query: 'summary of the blog',

ai_selector: '#content'

}

});

const decoder = new TextDecoder();

console.log(decoder.decode(response.data));

}

await summarizeBlog('https://www.scrapingbee.com/blog/best-javascript-web-scraping-libraries/');

So, here are the key points:

- Inside summarizeBlog, we call client.get() and pass:
  - url: the page we want to scrape.
  - ai_query: what we want the AI to produce (a summary).
  - ai_selector: which part of the page the AI should focus on.

- ScrapingBee fetches the page, executes JS if needed, and extracts the HTML inside #content.

- The AI reads that section and generates a summary for us.

- The API returns raw bytes, so we decode them with TextDecoder.

Example output:

This blog post discusses the best JavaScript web scraping libraries available in 2026. It covers a range of tools from lightweight HTML parsers like Cheerio and htmlparser2 to full browser automation tools like Playwright and Puppeteer, and even SaaS solutions like ScrapingBee. For each library, it provides an overview, a quick start code snippet, and lists its pros and cons. The post emphasizes choosing the right tool based on whether the target website is static or dynamic and whether complex tasks like logins or JavaScript rendering are involved. It also mentions solutions for bypassing bot detection and concludes by summarizing the key considerations for web scraping with JavaScript.

How cool is that? The future is already here.

Headless browsers in JavaScript

Modern websites are way more complicated than they used to be. A lot of them don't send you usable HTML right away; instead, they rely on JavaScript frameworks, dynamic rendering, API calls, infinite scrolling, and all kinds of async behavior.

At some point, a simple "fetch-and-parse" scraper just isn't enough anymore. You need something that can actually run the page like a real user's browser. That's where headless browsers come in.

Headless browsers let your scraper:

- execute JavaScript

- wait for dynamic content

- click buttons, scroll, and trigger interactions

- load data from background API calls

- render full SPAs (React, Vue, Angular, you name it)

So whenever you hit a website that looks empty when you fetch() it, but magically shows content in a real browser, a headless browser is the tool that bridges that gap.

Let's take a look at how they help you scrape JavaScript-heavy sites and SPAs without going insane.

1. Puppeteer: The headless browser

Puppeteer is basically a remote control for Chrome. It lets you spin up a real browser (headless by default), open pages, click stuff, type into forms, grab screenshots, and generate PDFs.

Compared to simple HTTP requests, Puppeteer lets you:

- load full SPAs (React / Vue / Angular)

- wait for JS-rendered content

- screenshot or PDF any page

- automate user flows (login, search, checkout, etc.)

So when basic Node.js web scraping with fetch and Cheerio stops working because "the page is empty without JS", Puppeteer is usually the next step.

Installing Puppeteer

Install it with:

npm install puppeteer

By default, this downloads a bundled Chromium/Chrome build (~180–300 MB depending on your OS). You can skip the download and point Puppeteer at an existing Chrome/Chromium using environment variables such as PUPPETEER_SKIP_DOWNLOAD (PUPPETEER_SKIP_CHROMIUM_DOWNLOAD in older releases) together with PUPPETEER_EXECUTABLE_PATH, but the recommended path is to use the bundled version, since that's what Puppeteer is tested against.

Example: Screenshot and PDF of a page

Let's grab a screenshot and a PDF of the ScrapingBee blog.

Create or update crawler.js:

import puppeteer from 'puppeteer';

const URL = 'https://www.scrapingbee.com/blog';

async function getVisual() {

let browser;

try {

browser = await puppeteer.launch({

headless: true // on by default

});

const page = await browser.newPage();

await page.goto(URL, {

waitUntil: 'networkidle2'

});

await page.screenshot({ path: 'screenshot.png', fullPage: true });

await page.pdf({ path: 'page.pdf', format: 'A4' });

console.log('Saved screenshot.png and page.pdf');

} catch (err) {

console.error('Puppeteer failed:', err.message);

} finally {

if (browser) {

await browser.close();

}

}

}

await getVisual();

What's happening here:

- puppeteer.launch() starts a headless browser instance.

- browser.newPage() opens a fresh tab.

- page.goto(URL, { waitUntil: 'networkidle2' }) navigates to the URL and waits until the page has mostly finished loading (including async requests).

- page.screenshot() captures the full page as a PNG.

- page.pdf() exports the page as a PDF.

- browser.close() shuts everything down cleanly.

This is already a legit web scraper JavaScript setup for visual checks. You can easily adapt it to:

- wait for specific selectors (e.g. await page.waitForSelector('.product'))

- extract text with page.evaluate()

- log in, paginate, and scrape behind auth

Just remember: when goto() resolves, the page might still be doing some lazy loading. For production-grade Node.js scraping, you'll often wait for specific elements instead of only relying on networkidle2.
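Here is a minimal sketch of that "wait for a specific element, then extract" flow. It assumes the target page renders .quote blocks client-side (the /js/ variant of quotes.toscrape.com is used as an assumed example), and it takes the puppeteer object as a parameter so the browser dependency stays explicit. The function names are made up for illustration.

```javascript
// Runs inside the browser page: turn .quote elements into plain objects.
// It must not reference variables from the Node.js side.
function extractQuotes(nodes) {
  return nodes.map((node) => ({
    text: node.querySelector('.text')?.textContent.trim() ?? '',
    author: node.querySelector('.author')?.textContent.trim() ?? '',
  }));
}

// `puppeteer` is passed in (e.g. the default export of the 'puppeteer' package).
async function scrapeRenderedQuotes(puppeteer, url) {
  const browser = await puppeteer.launch();
  try {
    const page = await browser.newPage();
    await page.goto(url, { waitUntil: 'domcontentloaded' });

    // Wait for the JS-rendered element instead of a generic network-idle state
    await page.waitForSelector('.quote', { timeout: 15000 });

    // $$eval runs extractQuotes in the page with all matching elements
    return await page.$$eval('.quote', extractQuotes);
  } finally {
    // Always release the browser, even if navigation or waiting fails
    await browser.close();
  }
}

// Usage sketch (assumes `import puppeteer from 'puppeteer'`):
// console.log(await scrapeRenderedQuotes(puppeteer, 'https://quotes.toscrape.com/js/'));
```

If the selector never shows up, waitForSelector rejects after the timeout, which is usually a clearer failure signal than silently scraping an empty page.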

If you want to dive deeper into Puppeteer, check out these guides:

- How to download a file with Puppeteer

- Handling and submitting HTML forms with Puppeteer

- Using Puppeteer with Python and Pyppeteer

2. Playwright: The modern headless browser framework

Playwright is Microsoft's answer to Puppeteer, but with a bigger toolbox. It supports Chromium, Firefox, and WebKit, works across Windows, macOS, and Linux, and has official bindings for Node.js, Python, Java, and .NET.

If Puppeteer feels like "control Chrome," Playwright feels like "control the entire browser world." Why people love Playwright for Node.js scraping:

- works with all major browsers

- powerful auto-waiting (it waits for elements intelligently)

- built-in handling for frames, downloads, dialogs, etc.

- excellent reliability compared to older tools

- easy to set up and script

- great for dynamic JS-heavy sites

Installing Playwright

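Install the library, then download the browser binaries:

npm install playwright

npx playwright install

The second command downloads Chromium, Firefox, and WebKit; Playwright can use any of them. Older Playwright releases fetched the browsers during npm install, but recent versions expect this explicit step.

If you only want Chromium:

npx playwright install chromium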

Basic example: Loading a page

Here's a Playwright example script:

import { chromium } from 'playwright';

async function main() {

// Launch Chromium (headless by default, so no browser window is shown)

const browser = await chromium.launch({

headless: true // set to false if you want to see the browser window

});

const page = await browser.newPage();

await page.goto('https://finance.yahoo.com/world-indices', {

waitUntil: 'domcontentloaded'

});

// wait a moment for JS-rendered content

await page.waitForTimeout(3000);

await browser.close();

}

await main();

Key points:

- chromium.launch() – starts a browser engine. Set headless: false if you want to watch the browser in action.

- browser.newPage() – opens a new tab.

- page.goto(url) – navigates to a page just like in a real browser. The waitUntil option picks which event to wait for: load, domcontentloaded, or networkidle.

- page.waitForTimeout(ms) – quick-and-dirty pause. For production scraping, you'd usually wait for specific elements with await page.waitForSelector('.some-js-element').

- browser.close() – shuts down everything.
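As a sketch of the "wait for specific elements" approach, the function below uses a Playwright locator instead of a fixed timeout. The browser type is passed in as a parameter, and the getRenderedTexts name, the selector, and the example URL are assumptions made for illustration.

```javascript
// `browserType` is passed in (e.g. `chromium` from the 'playwright' package).
async function getRenderedTexts(browserType, url, selector) {
  const browser = await browserType.launch();
  try {
    const page = await browser.newPage();
    await page.goto(url, { waitUntil: 'domcontentloaded' });

    // Wait until the first matching element is visible (or time out)
    const items = page.locator(selector);
    await items.first().waitFor({ state: 'visible', timeout: 15000 });

    // Collect the text of every matching element
    const texts = await items.allTextContents();
    return texts.map((t) => t.trim());
  } finally {
    // Always release the browser, even if navigation or waiting fails
    await browser.close();
  }
}

// Usage sketch (assumes `import { chromium } from 'playwright'` and a page
// that renders `.quote .text` elements client-side):
// console.log(await getRenderedTexts(chromium, 'https://quotes.toscrape.com/js/', '.quote .text'));
```

Compared with a fixed pause, this returns as soon as the content exists and fails with a timeout error when it never appears.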

When to use Playwright

Playwright is ideal when you need:

- dynamic web scraping in Node.js

- heavy JavaScript rendering

- SPA crawling

- login flows

- clicking buttons, filling forms, scrolling

- multiple browser engines for testing or evasion

If you want a deeper dive, check out our full tutorial: Playwright web scraping guide

Comparison of headless browser libraries

| Library | ✔️ Pros | ❌ Cons |

|---|---|---|

| Puppeteer | Battle-tested; huge ecosystem; great for Chrome-focused scraping | Chrome/Chromium only; heavier to scale |

| Playwright | Cross-browser (Chromium, Firefox, WebKit); smarter auto-waiting; more reliable for modern sites | Newer ecosystem; slightly heavier install |

🤖 Curious how headless browsers hold up against fingerprinting detection? Check out our deep dive: How to bypass CreepJS and spoof browser fingerprinting.

JavaScript vs. Python for web scraping

If you hang around scraping circles long enough, you'll notice one big truth: most people either scrape with Python or scrape with JavaScript, and both camps have very good reasons.

Since this article is all about web scraping in JavaScript and web scraping in Node.js, it's worth taking a moment to compare the two ecosystems and when you'd reach for which.

Where Python shines

Python has been the "default scraping language" for a long time. Why?

- Scrapy – the king of large-scale crawlers, queues, pipelines, spiders

- BeautifulSoup & lxml – simple and powerful HTML parsing

- Requests – clean HTTP client

- Massive data ecosystem (Pandas, NumPy, Jupyter, etc.)

- Tons of learning resources — especially for traditional scraping workflows

Python is especially strong when your work includes:

- heavy data cleaning

- crawling entire sites at scale

- exporting structured datasets

- analysis and reporting

If you want a primer on Python's approach, check our full guide.

Where JavaScript and Node.js shine

Web scraping with JavaScript brings its own big advantages, especially today:

- Async by default – perfect for fast concurrent HTTP requests

- fetch built in – lightweight and modern

- Cheerio & JSDOM – great for static parsing

- Puppeteer / Playwright – arguably the best headless browser tools in any language

- Ideal for JS-heavy sites – because you're running the same language the site was written in

- One language across your stack – frontend, backend, and scraping

If you're already building apps in JS, doing your scraping in Node.js just keeps your world simpler. For dynamic sites? JavaScript often has the best tooling of all.

So which one is "better"?

The honest answer: neither wins, because they're good at different things.

- Python — a mature scraping and data ecosystem.

- JavaScript — best tooling for modern, JavaScript-rendered websites.

Most serious teams actually end up using both depending on the project.

ScrapingBee bridges both worlds

No matter which language you prefer, ScrapingBee works the same.

Whether you're doing:

- web scraping in JavaScript

- web scraping with JavaScript in Node.js

- or scraping with Python

ScrapingBee gives you: full browser rendering, proxy rotation, anti-bot avoidance, AI extraction, and a single API that works in any language. So you can pick the language you enjoy, not the one forced on you by anti-bot systems.

Ready to scrape smarter with Node.js?

By now you've seen everything from basic fetch scraping to full headless browsers, and you've probably noticed one thing: scraping gets messy fast.

Proxies, CAPTCHAs, dynamic rendering, rate limits, anti-bot systems... a simple Node.js web scraper can suddenly turn into a full-time job. If you want to skip the headaches and just get reliable data, a web scraping API for Node.js is the fastest way forward.

That's exactly what ScrapingBee is built for.

- No proxy management

- No browser clusters

- No fingerprinting issues

- No fighting CAPTCHAs

- Just clean HTML or structured data, every time

Whether you're scraping 10 pages or 10 million, ScrapingBee handles the hard parts so you don't have to. If you're ready to make your Node.js scraping setup smoother, faster, and way less painful...

👉 Get started now — free trial included, no nonsense.

Build smarter scrapers. Not bigger headaches.

Summary

That was a big journey, but hopefully a useful one. You've now seen the main tools and techniques you can use for web scraping with JavaScript and Node.js — from simple fetch requests all the way up to headless browsers.

Here's the quick recap:

- ✅ Node.js is a server-side JavaScript runtime with a non-blocking, event-driven architecture powered by the Event Loop.

- ✅ HTTP clients — built-in fetch, native HTTP modules, Axios, SuperAgent, node-fetch, and others — let you send requests and receive responses from any server.

- ✅ Cheerio gives you a jQuery-style API for parsing and extracting data from HTML, but does not run JavaScript.

- ✅ JSDOM builds a real DOM environment from HTML so you can manipulate it using standard browser APIs.

- ✅ Puppeteer and Playwright act as high-level browser automation tools, letting you interact with pages just like a real user — perfect for JS-heavy or dynamic websites.

This article focused on the tools available in the JavaScript scraping ecosystem. There are, of course, more challenges out in the wild — especially around detection and anti-bot measures. If you want to learn how to stay undetected, we have a dedicated guide on how not to get blocked as a crawler. Definitely worth a read.

💡 And if you love scraping but don't have the time (or sanity) to maintain your own crawler infrastructure, check out our scraping API platform. ScrapingBee handles all the hard parts for you so you can focus on the data, not the defenses.

Web scraping with Node.js FAQs

Is web scraping with JavaScript legal?

Generally yes. Scraping public data is legal in many jurisdictions if you respect copyright, robots.txt, and don't overload servers.

Laws differ by country, so check local regulations. When in doubt, consult a lawyer or review sources like the hiQ vs. LinkedIn ruling.

What's the best library for web scraping in Node.js?

For static sites, fetch + Cheerio is lightweight and fast. For dynamic or JavaScript-heavy sites, Playwright or Puppeteer is the better choice. Each tool fits different use cases, so there is no single "best for everything."

How do I scrape dynamic websites with JavaScript?

Use a headless browser like Playwright or Puppeteer. They execute JavaScript, wait for dynamic elements, and let you interact with the page. Perfect for SPAs or sites that load content via API calls after page load.

Should I use Puppeteer or Cheerio?

- Use Cheerio for simple HTML parsing when the page is static.

- Use Puppeteer when the site requires JavaScript execution, login flows, clicks, scrolling, or dynamic rendering.

The two tools complement each other; neither fully replaces the other.

Can I avoid getting blocked when scraping?

Yes, with techniques like rotating proxies, realistic headers, delays, proper concurrency, browser automation, and avoiding suspicious patterns. For hassle-free scaling, a scraping API like ScrapingBee handles anti-bot systems and CAPTCHAs for you automatically.

Is Python or JavaScript better for web scraping?

- Python excels at large-scale crawling and data processing (Scrapy, BeautifulSoup).

- JavaScript is perfect for handling JS-heavy sites and async workflows.

Neither is "better". Use the one that fits your workflow. ScrapingBee works with both, so you're free to pick.

Resources

Want to dive deeper? Here are some solid places to continue exploring:

- Node.js Website – Official docs, guides, and everything about the Node.js runtime.

- Puppeteer Docs – Google's official Puppeteer documentation and API reference.

- Playwright – A great alternative to Puppeteer, backed by Microsoft.

- Generating Random IPs to Use for Scraping – How to rotate IPs to avoid basic bot detection.

- ScrapingBee's Web Scraping Blog – Tons of tutorials, guides, and deep dives for all platforms.

- Handling Infinite Scroll with Puppeteer – Useful if you deal with endless-feed websites.

- Node-unblocker – A Node.js package to route scraping traffic through proxies.

- A JavaScript Developer's Guide to cURL – If this article clicked for you, this one will too.

Kevin worked in the web scraping industry for 10 years before co-founding ScrapingBee. He is also the author of the Java Web Scraping Handbook.