Making jokes on the internet is a fine art and Reddit users globally are working diligently to keep the dad jokes coming, because the only thing better than winning an internet argument is winning an internet upvote contest with a punchline your dad would be proud of.

In fact, Reddit's vast reservoir of dad jokes may just be the secret ingredient that helped it reach a staggering $6.4 billion valuation at its recent IPO. Who knew that the jokes your dad repeats at every family gathering could be worth their weight in Reddit Gold? But which country attempts the highest proportion of jokes in its comment section?

In this article, we used AI to analyze what percentage of comments from each country's subreddit were (attempted) jokes to see which nations are trying their hardest to have a sense of humor.

We used the Reddit API to obtain the year’s top 50 threads from each country’s subreddit. Then, we retrieved the comments for each of these threads and used AI (Mistral 7B LLM) to classify the top-level comments as “joke” or “not joke” in relation to the thread topic. In total, we covered 352,686 comments from 9,969 threads. Finally, we analyzed the classifications for some interesting insights.

By merging data science with a dash of humor analysis, we present a unique leaderboard of global jest, highlighting which countries are earnestly upping their joke game on Reddit.

I’ll discuss the insights first, followed by the methods used, so you can skip the latter if you aren’t into writing code. Below are some examples of comments that were classified as jokes.

Exclusion and Caveats

- Some subreddits we analyzed are not used exclusively by citizens of the respective country. For example, r/northkorea is meant for the global community to discuss North Korea, while North Korea's own citizens have severely limited internet access and are unlikely to be using Reddit.

- Amongst the UK countries, we analyzed England, Scotland, and Wales separately. We did not analyze Northern Ireland separately as the Ireland subreddit (r/Ireland) claims to be island-wide.

- Northern Cyprus, Transnistria, Abkhazia, Aland, Tokelau, and Cocos Islands were excluded due to insufficient comment data and/or unavailability of GIS map boundaries.

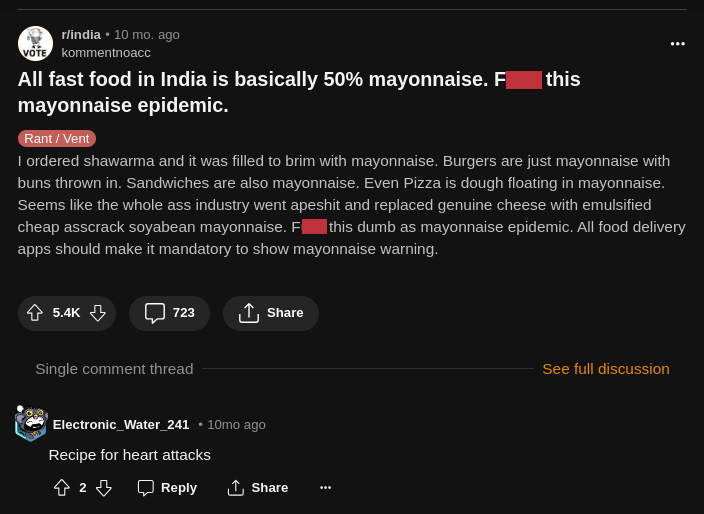

The Most Vocal Countries

As a rough baseline for further discussion, I first ranked the countries based on the average number of comments per analyzed thread. The following are the top 20 countries with the highest average comments per thread.

One trend we can readily note from the above chart is that 8 of the top 10 countries have a significant English-speaking population, either in the absolute number of speakers or as a share of the population.

The Most Humorous Countries

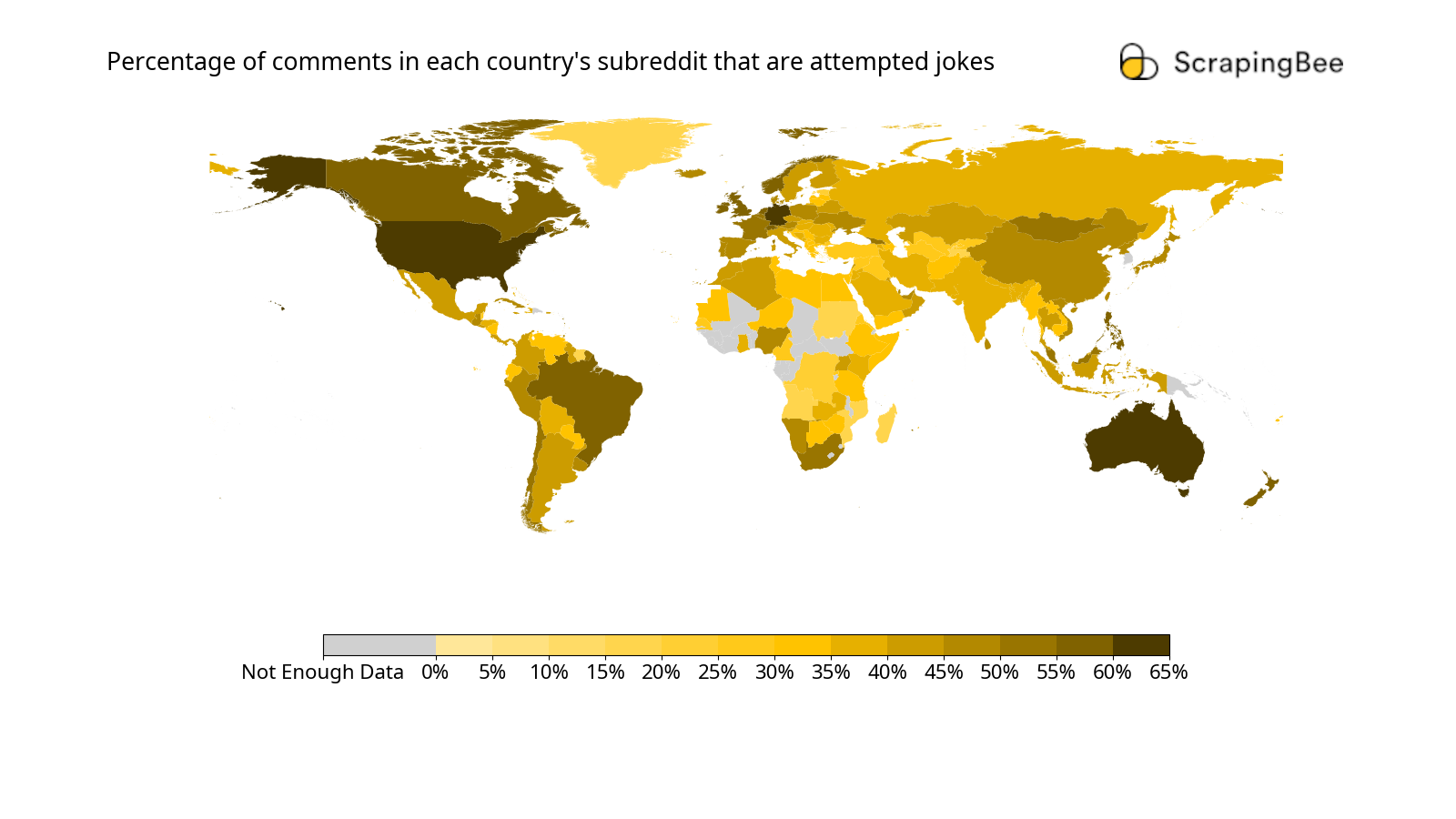

Let’s see the choropleth map with each country color-coded based on the percentage of jokes:

A choropleth map shows statistical data for geographical areas by color-coding each area according to its value. The base map with the country borders was obtained from the World Bank’s Official Data Catalog, under the Creative Commons Attribution 4.0 license. The colors were added onto this base map.
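To make the color coding concrete, here is a minimal sketch of how a value can be assigned to a discrete color class, assuming the same bin edges as the map in this article (-10 for "not enough data", then 5-point steps up to 65%). The functions `color_bin` and `bin_label` are hypothetical helpers for illustration; the actual map delegates this step to matplotlib's `BoundaryNorm`.

```python
from bisect import bisect_right

# Bin edges as used for the map: -10 marks "not enough data",
# then 5-point steps from 0% to 65%.
BOUNDS = [-10, 0, 5, 10, 15, 20, 25, 30, 35, 40, 45, 50, 55, 60, 65]


def color_bin(value: float) -> int:
    """Return the index i of the half-open bin [BOUNDS[i], BOUNDS[i+1]) holding value.

    Values outside the covered range are clamped to the first or last bin.
    """
    index = bisect_right(BOUNDS, value) - 1
    return max(0, min(index, len(BOUNDS) - 2))


def bin_label(value: float) -> str:
    """Human-readable label for the value's bin, mirroring the map legend."""
    i = color_bin(value)
    if BOUNDS[i] < 0:
        return "Not Enough Data"
    return f"{BOUNDS[i]}-{BOUNDS[i + 1]}%"
```

For example, a country at 42% lands in the "40-45%" class, and every country in that class gets the same color.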

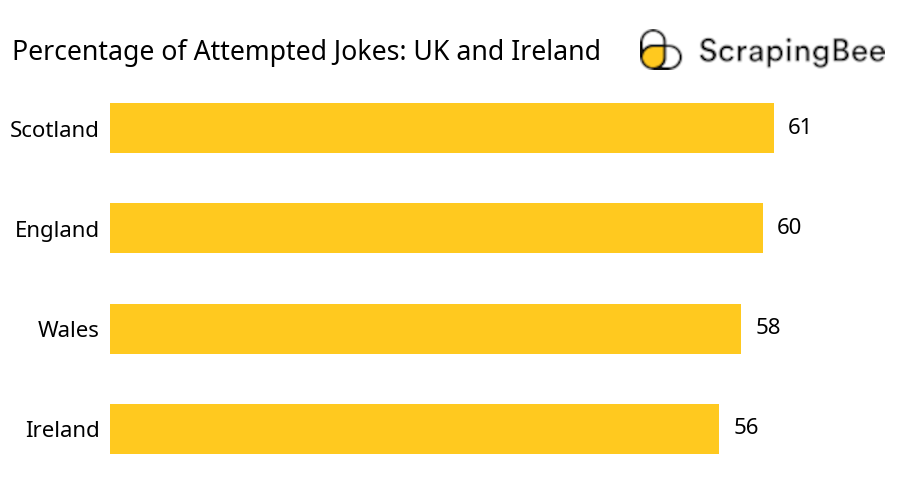

From the above map, we can readily observe that similar colors tend to be geographically contiguous; for instance, most of Asia falls in the 35-50% range. This suggests that cultural attributes are somewhat continuous across political borders. To illustrate this further, let's look at the numbers for the UK and Ireland:

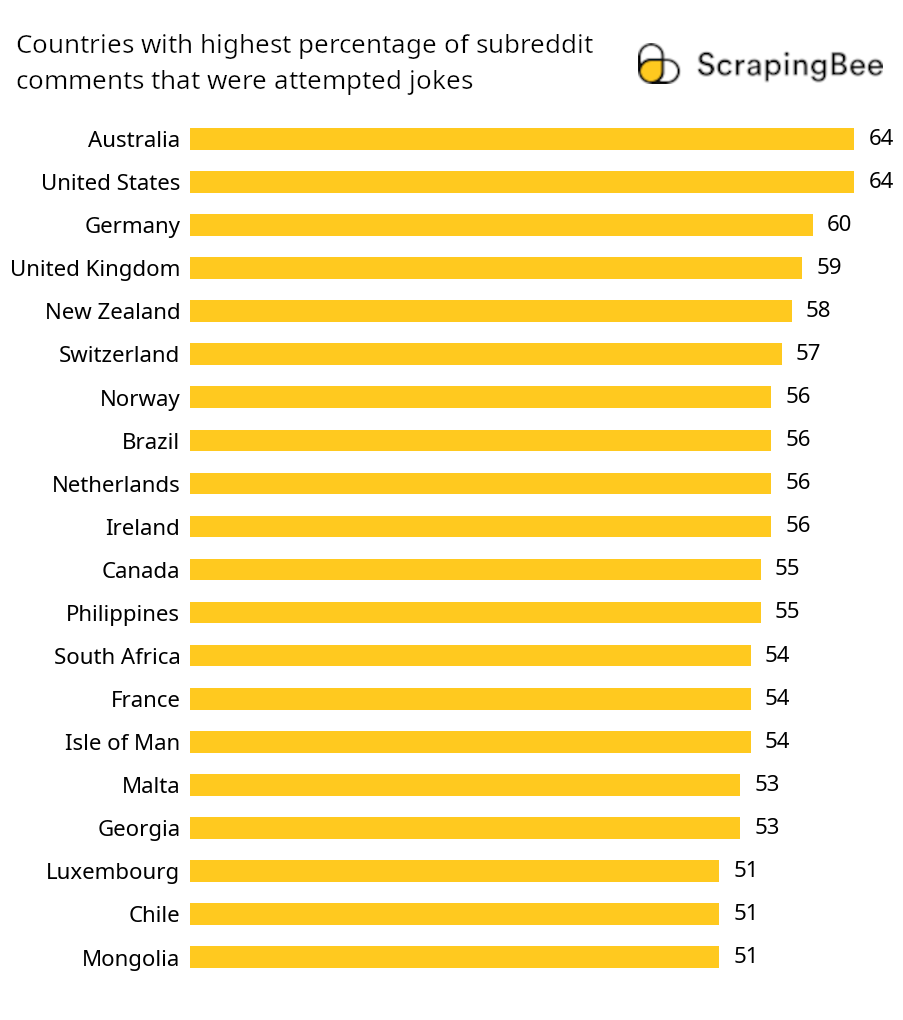

We see that there is no substantial difference in the percentage of attempted jokes among these countries. Next, let’s also see the top 20 most humorous countries as a bar chart:

We see that the chart is topped by the United States and Australia. Most countries featured in this chart have a large share of English speakers or at least write in the Roman alphabet. Some countries from the most vocal countries’ list, such as India, Russia, China, and Ukraine, are absent here. These countries do not write their native languages in the Roman alphabet. One hypothesis is that users there express humor in their first language and reserve English for more formal and serious discussions. It is also possible that they write words from their native language in Roman script, which is convenient to type on a standard QWERTY keyboard; Hinglish (Romanized Hindi) is a very popular example of this. The LLM may not understand this kind of mixed-language text well enough to classify such comments as jokes, so we could have missed some jokes from these countries.

In case you'd like to see the exact numbers for all the countries, we've published them in a Google Sheet.

Getting Data From Reddit API Using PRAW

I started with a CSV file, country-subs.csv, that mapped country names to their subreddits:

Country Name,Subreddit
England,r/england
Wales,r/Wales
Scotland,r/Scotland
China,r/China
India,r/India
United States,r/MURICA
...
...
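A minimal sketch, using only the standard library, of how such a file can be read into a mapping of country names to subreddit slugs while skipping entries that are not real subreddits. It follows the same availability rule as the fetching code in this article (anything not starting with `r/`, such as `#N/A` or `#private`, is unavailable). The helper `load_subreddits` and the sample rows are hypothetical.

```python
import csv
import io
from typing import Dict, TextIO


def load_subreddits(f: TextIO) -> Dict[str, str]:
    """Map each country name to its subreddit slug (without the 'r/' prefix).

    Rows whose subreddit does not start with 'r/' (e.g. '#N/A' or '#private')
    are skipped.
    """
    mapping = {}
    for row in csv.DictReader(f):
        subreddit = row["Subreddit"].strip()
        if subreddit.startswith("r/"):
            mapping[row["Country Name"]] = subreddit[2:]
    return mapping


# hypothetical sample rows in the same format as country-subs.csv
sample = io.StringIO(
    "Country Name,Subreddit\n"
    "England,r/england\n"
    "Wales,r/Wales\n"
    "Example Country,#private\n"
)
print(load_subreddits(sample))  # prints {'England': 'england', 'Wales': 'Wales'}
```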

Basic Setup

Python was the language of choice for this analysis. I installed 3 additional packages for this part:

- PRAW: Python wrapper for the Reddit API.

- dataset: A simple SQL wrapper for working with SQLite databases. SQLite databases are contained in a single file and are easy to move around between local and cloud environments. This was the ideal choice for the scale of this project.

- python-dotenv: To load API keys and other secrets from the .env file.

Scraping was not necessary as Reddit provides us with an API to access its data. My basic setup code looked like this:

import csv  # for reading country-subs.csv
import time  # for setting delays between successive API calls

import dataset
from dotenv import dotenv_values
import praw

config = dotenv_values(".env")

# PRAW Setup
username = config["REDDIT_USERNAME"]
reddit = praw.Reddit(
    client_id=config["REDDIT_CLIENT_ID"],
    client_secret=config["REDDIT_CLIENT_SECRET"],
    password=config["REDDIT_PASSWORD"],
    username=username,
    # user_agent must be a meaningful string as per Reddit's recommendations
    user_agent=f"linux:script.s-hub:v0.0.1 (by /u/{username})",
    # Reddit's API rate limits reset every 10 mins (600 seconds)
    ratelimit_seconds=600,
)

# check that the Reddit login worked; this should print my username
print(f"Logged Into Reddit As: {str(reddit.user.me())}")

# connect to SQLite db; this will create the file if it does not exist
db = dataset.connect("sqlite:///data.db")

Getting Top Threads by Country

I first loaded the list of countries and their respective subreddits from the country-subs.csv file. Then, for each country, I retrieved the top 50 threads of the past year and stored them in the database. Though PRAW handles throttling and API rate limiting, it was helpful to add short pauses between calls. Read more about handling rate limits on our blog.
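PRAW already waits when Reddit's rate limit is hit, so these extra pauses are a courtesy rather than a requirement. As a hedged sketch of the idea, the hypothetical `Throttle` class below enforces a minimum interval between successive calls instead of always sleeping a fixed amount. The clock and sleep functions are injectable (an assumption made for testability) so the behavior can be checked without actually waiting.

```python
import time
from typing import Callable


class Throttle:
    """Enforce a minimum interval (in seconds) between successive calls to wait()."""

    def __init__(
        self,
        min_interval: float,
        clock: Callable[[], float] = time.monotonic,
        sleep: Callable[[float], None] = time.sleep,
    ) -> None:
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous call; None before the first call

    def wait(self) -> float:
        """Sleep just long enough to respect min_interval; return the time slept."""
        now = self._clock()
        delay = 0.0
        if self._last is not None:
            delay = max(0.0, self.min_interval - (now - self._last))
            if delay > 0:
                self._sleep(delay)
        self._last = now + delay  # when this call effectively went through
        return delay
```

In the thread-fetching loop, `throttle = Throttle(1.0)` followed by `throttle.wait()` at the top of each iteration would replace `time.sleep(1)`, skipping the pause whenever the previous request already took longer than a second.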

# load countries and subs from country-subs.csv
countries = []
with open("country-subs.csv") as f:
    for country in csv.DictReader(f):
        countries.append(country)

# iterate over each country to get the top threads from its sub
for country in countries:
    time.sleep(1)  # delay between API calls
    subreddit = country["Subreddit"]
    country_name = country["Country Name"]
    print(f"\nGetting Top 50 Threads from {subreddit} ({country_name})")

    if not subreddit.startswith("r/"):
        # some countries may have subreddit marked as #N/A: Not Available
        # some countries may have private subreddits, marked as #private
        print(f"Unavailable Subreddit: {subreddit}, Skipping")
        # stop here and move to next country
        continue

    # if the subreddit is available, go ahead
    subreddit_slug = subreddit[2:]  # to remove 'r/' from the start
    n_threads = 0  # to keep count of how many threads were obtained

    # get the top 50 threads of the past year
    threads = reddit.subreddit(subreddit_slug).top(
        time_filter="year",
        limit=50,
    )

    for thread in threads:
        row = {
            "thread_id": thread.id,
            "title": thread.title,
            "score": thread.score,
            "subreddit": subreddit,
            "country_name": country_name,
            "processed": False,
        }
        # creates table 'threads' if it does not exist,
        # inserts the dict 'row' as a row in the table;
        # non-existent columns are automatically created
        db["threads"].insert(row)
        n_threads += 1  # increment counter

    print(f"Obtained {n_threads} Threads")

In addition to the relevant data fields about the thread, I also added “processed” as a column. This is generally very helpful for iterating over large datasets. Once I run a task over a row, I mark it as processed. If the program crashes midway and has to be restarted, I can skip the already processed rows and restart right where the program crashed.
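The same checkpointing pattern can be shown with Python's built-in `sqlite3` module, independent of the `dataset` wrapper. In this sketch the table layout and the `process_pending` helper are hypothetical. Each row is marked as processed and committed only after its work succeeds, so if the work raises, the row stays pending, and a rerun after a crash picks up exactly the rows that are left.

```python
import sqlite3
from typing import Callable


def process_pending(conn: sqlite3.Connection, work: Callable[[str], None]) -> int:
    """Run `work` on every unprocessed thread, marking each one done as it finishes.

    Returns the number of rows processed in this run. Rows already marked as
    processed (e.g. by an earlier, interrupted run) are skipped.
    """
    # fetch the pending rows up front so the updates below don't disturb iteration
    pending = conn.execute(
        "SELECT id, thread_id FROM threads WHERE processed = 0 ORDER BY id"
    ).fetchall()
    for row_id, thread_id in pending:
        work(thread_id)  # if this raises, the row is not marked as processed
        conn.execute("UPDATE threads SET processed = 1 WHERE id = ?", (row_id,))
        conn.commit()  # checkpoint after every row
    return len(pending)


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE threads (id INTEGER PRIMARY KEY, thread_id TEXT, processed INTEGER)"
)
conn.executemany(
    "INSERT INTO threads (thread_id, processed) VALUES (?, ?)",
    [("abc1", 1), ("abc2", 0), ("abc3", 0)],  # hypothetical thread IDs
)
conn.commit()

seen = []
print(process_pending(conn, seen.append))  # prints 2: only the pending rows are handled
print(process_pending(conn, seen.append))  # prints 0: nothing left on a rerun
```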

Getting Comments for Each Top Thread

Once I had the top 50 threads from each country, the next step was to get the comments from each of these threads.

for row in db["threads"]:
    thread_id = row["thread_id"]

    if row["processed"]:
        print(f'Skipping row #{row["id"]} ({thread_id}), Already Processed')
        # skip this thread if it has already been processed earlier
        continue

    # proceed if this thread has not been processed
    print(f'Getting Comments for row #{row["id"]} ({thread_id})')

    # obtain the comments using the Comment Forest object
    thread = reddit.submission(thread_id)
    comment_forest = thread.comments

    # load all subcomments and flatten the list
    comment_forest.replace_more(limit=None)
    comment_list = comment_forest.list()

    for comment in comment_list:
        comment_row = {
            "comment_id": comment.id,
            "thread_id": thread_id,
            "body": comment.body,
            "is_submitter": comment.is_submitter,
            "parent_id": comment.parent_id,
            "mistral_output": "",
        }
        # creates a 'comments' table if it does not exist,
        # creates columns if they do not exist, and adds the row
        db["comments"].insert(comment_row)

    # Mark the row as processed
    db["threads"].update({"id": row["id"], "processed": True}, ["id"])
    print(f"Obtained {len(comment_list)} Comments for {thread_id}")

    # Set some delay before calling the API again.
    # The number of API calls incurred is
    # roughly proportional to number of comments.
    time.sleep(3 + len(comment_list) * 0.002)

Note that I did not add a “processed” column in the comments table, but there is a blank “mistral_output” column. In the next step, I filled this column for each comment, so if the column was still blank for a comment, then it wasn’t processed yet.

Sentiment Analysis With Mistral 7B

Though Mistral 7B is an open-weight LLM and decently fast, it requires a good GPU setup to run efficiently. It was easier to use Mistral AI’s API to run the comments through Mistral 7B, and there is a Python package (mistralai) available to call the API. I first set up a function to pass a given prompt to the API, then called it with a constructed prompt for each comment. The title of the thread was also included in the prompt to provide context. The code is below:

from mistralai.client import MistralClient
from mistralai.models.chat_completion import ChatMessage

# setup Mistral Client
mistral_client = MistralClient(api_key=config["MISTRAL_API_KEY"])


def ask_mistral7b(prompt):
    messages = [
        ChatMessage(
            role="system",
            content='You are a sentiment analysis bot. You are allowed to output only "joke" or "not joke". Keep the answer brief and do not say anything other than "joke" and "not joke".',
        ),
        ChatMessage(
            role="user",
            content=prompt,  # the prompt passed into this function
        ),
    ]
    chat_response = mistral_client.chat(
        model="open-mistral-7b",
        messages=messages,
    )
    return chat_response.choices[0].message.content


for comment in db["comments"]:
    print(comment["id"])

    if comment["mistral_output"]:
        print("DONE, SKIPPING\n")
        # skip if the comment already has some output
        continue

    # reset for every comment so a previous comment's skip reason never carries over
    response = ""
    if comment["parent_id"].startswith("t1_"):
        response = "skipped:subcomment"
    elif "[" in comment["body"]:
        if comment["body"].startswith("Your post was automatically removed"):
            response = "skipped:removed/deleted/other"
        elif (
            comment["body"].startswith("[")
            and comment["body"].endswith("]")
            and comment["body"].count("]") == 1
        ):
            response = "skipped:removed/deleted/other"
        elif "](" in comment["body"]:
            response = "skipped:has_link"

    # if this comment is to be skipped
    if response:
        # update skip reason in DB
        db["comments"].update(dict(id=comment["id"], mistral_output=response), ["id"])
        print(response)
        # move to next comment here
        continue

    thread = db["threads"].find_one(thread_id=comment["thread_id"])
    title = thread["title"]
    comment_body = comment["body"]

    prompt = "The title is:\n" + title
    prompt += "\n\nThe comment is:\n" + comment_body
    prompt += '\n\nIn the context of the title, if the comment sounds humorous or sarcastic, say "joke", else say "not joke".'

    # call Mistral API using above function
    response = ask_mistral7b(prompt)
    print(response)

    # update response in DB
    db["comments"].update(dict(id=comment["id"], mistral_output=response), ["id"])

In the above code, the two main things to note are the prompt and the comments that were skipped from the analysis.

I skipped analyzing some comments for the following reasons:

- The comment is not a direct comment on the thread, but a response to one of the comments. In this case, it becomes difficult to place this in the context of the title. The Reddit API marks such comments with a “parent_id” starting with “t1_”.

- The comment was deleted, removed, or taken down for some other reason. This is usually indicated by the comment text starting and ending with a square bracket, as in “[deleted]” or “[removed]”.

- The comment has a link. In this case, there may be additional context behind the link that the LLM cannot see. Also, a commenter who provides a link is usually making a serious point and backing it up; jokes rarely need links.
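The skip rules above can be expressed as one small pure function, which makes them easy to test in isolation. This is a sketch rather than the exact loop used above: the name `skip_reason` is hypothetical, but it reproduces the same checks (a `t1_` parent ID, Reddit's auto-removal notice, a body such as `[deleted]` wrapped in a single pair of square brackets, and a Markdown link of the form `[text](url)`).

```python
from typing import Optional


def skip_reason(parent_id: str, body: str) -> Optional[str]:
    """Return why a comment should be skipped, or None if it should be classified."""
    if parent_id.startswith("t1_"):
        # the parent is another comment (t1_), so this is a reply, not a direct comment
        return "skipped:subcomment"
    if "[" in body:
        if body.startswith("Your post was automatically removed"):
            return "skipped:removed/deleted/other"
        if body.startswith("[") and body.endswith("]") and body.count("]") == 1:
            # placeholders such as "[deleted]" or "[removed]"
            return "skipped:removed/deleted/other"
        if "](" in body:
            # Markdown link syntax: [text](url)
            return "skipped:has_link"
    return None
```

Direct comments have a parent ID starting with `t3_` (the submission), so they pass the first check and are only skipped if their body matches one of the other rules.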

The system prompt I used was “You are a sentiment analysis bot. You are allowed to output only "joke" or "not joke". Keep the answer brief and do not say anything other than "joke" and "not joke".” Though asked to output only “joke” or “not joke”, the model sometimes added explanations, such as “not joke. The comment does not seem to be humorous or sarcastic in the given context.” As long as the output included “joke” or “not joke”, I could classify the comment accordingly. For each comment, I constructed the main prompt as follows:

“The title is:
{thread title here}

The comment is:
{comment here}

In the context of the title, if the comment sounds humorous or sarcastic, say "joke", else say "not joke".”

Aggregating and Visualizing Data With Pandas, Matplotlib and Geoplot

To aggregate and visualize the data, I installed 4 more Python packages:

- pandas: for analyzing and manipulating data in a tabular format.

- geopandas: for interfacing map data with pandas.

- matplotlib: for plotting bar graphs.

- geoplot: for plotting choropleth maps.

Aggregating the Data

To start with, I had to aggregate both the threads data and comments data (including Mistral’s output) at the country level. The key steps involved were:

- Count the number of threads obtained per country. This will be 50, or fewer if the country’s subreddit has been inactive.

- Count the total number of comments obtained per country.

- Identify and count the comments to be used in the analysis, per country. This includes only direct comments that have not been deleted, removed, or redacted. This was labeled as “valid direct comments” and was the denominator to calculate the proportion of jokes.

- Identify and count the comments that were classified as “joke” using the Mistral output, again per country. Essentially, this was done by picking the comments whose output contained the substring “joke” but not “not joke” or “not a joke”. Though instructed to output “not joke”, the LLM tended to output a more common (and grammatically correct) variant of the phrase: “not a joke”. This was not surprising, since LLMs tend to favor the phrasings that are most common in their training data.

- Calculate the average number of comments per thread for each country.

- For each country, calculate the percentage of jokes based on the number of “joke” comments for every 100 valid direct comments.
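The labelling rule in the list above (a “joke” substring without “not joke” or “not a joke”) can be isolated as a small function. A minimal sketch, assuming the raw model reply is stored as a string; the name `is_joke` is hypothetical. Because it relies on plain substring checks, any reply containing “not a joke” counts as not a joke, whatever else it says.

```python
def is_joke(mistral_output: str) -> bool:
    """Classify a raw model reply using the substring rules described above."""
    text = mistral_output.lower()
    if text.startswith("skipped:"):
        return False  # the comment was never sent to the model
    if "not joke" in text or "not a joke" in text:
        return False
    return "joke" in text
```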

The code I used is below:

import pandas as pd
import dataset

# connect to the SQLite Database
db = dataset.connect("sqlite:///data.db")

# read country subs CSV (indexed by "Country Name") and filter out ones with invalid subs
df = pd.read_csv("country-subs.csv", index_col=0).fillna("")
df = df.loc[df["Subreddit"].str.startswith("r/")].copy()  # copy avoids SettingWithCopyWarning

# initialize columns for country level aggregation
df["Thread Count"] = 0
df["Comment Count"] = 0
df["Valid Direct Comments"] = 0
df["Jokes"] = 0

# aggregate thread count
for thread in db["threads"]:
    df.loc[thread["country_name"], "Thread Count"] += 1

for comment in db["comments"]:
    thread = db["threads"].find_one(thread_id=comment["thread_id"])

    # add to aggregate comment count
    df.loc[thread["country_name"], "Comment Count"] += 1
    mistral_output = comment["mistral_output"].lower()

    # if comment was skipped, do not add to valid comment count;
    # move to next comment
    if mistral_output.startswith("skipped:"):
        # make an exception for comments with links
        if mistral_output != "skipped:has_link":
            continue

    # if continue wasn't executed, comment is valid:
    # add to valid direct comments aggregate
    df.loc[thread["country_name"], "Valid Direct Comments"] += 1

    # if output has "not joke" or "not a joke",
    # do not increment joke aggregate, continue instead
    if "not joke" in mistral_output:
        continue
    if "not a joke" in mistral_output:
        print(comment["id"], "not a joke", comment["mistral_output"])
        continue

    # if output lacks "not joke" and "not a joke"
    # but has "joke" in it, increment joke aggregate
    if "joke" in mistral_output:
        df.loc[thread["country_name"], "Jokes"] += 1

# calculate averages and percentages based on aggregated numbers
df["Comments Per Thread"] = (df["Comment Count"] / df["Thread Count"]).round()
# round to a whole percent (avoids float artifacts such as 56.99999999999999)
df["Joke Percentage"] = (100 * df["Jokes"] / df["Valid Direct Comments"]).round()

# mark countries with less data using arbitrary negative value
df["Joke Percentage"] = df["Joke Percentage"].mask(df["Valid Direct Comments"] < 100, -10)

# write results to share on Google Sheet
df.to_csv("final_results.csv")

Two important caveats to note from the above code are:

- Comments skipped for having a link are added to the denominator because they were considered legitimate comments that aren’t likely to be humorous. Comments skipped for other reasons were excluded.

- Countries with fewer than 100 valid direct comments (the denominator) were assigned an arbitrary joke percentage of -10, so that they could be shown as “Not Enough Data” in the choropleth map later.
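A worked example of the per-country arithmetic, as a hedged sketch: the function `joke_percentage` is hypothetical and the sample counts below are illustrative only, not results from this study. It applies the same rules as the pandas code: round to a whole percent, and substitute the -10 sentinel below 100 valid direct comments. Python's `round()` rounds exact halves to the nearest even number, as pandas' `Series.round()` does.

```python
def joke_percentage(jokes: int, valid_direct_comments: int, min_comments: int = 100) -> int:
    """Percentage of valid direct comments classified as jokes, rounded to a whole number.

    Returns -10 (the "Not Enough Data" sentinel) when there are fewer than
    `min_comments` valid direct comments to base the figure on.
    """
    if valid_direct_comments < min_comments:
        return -10
    return round(100 * jokes / valid_direct_comments)


# hypothetical country: 320 jokes out of 500 valid direct comments -> 64
print(joke_percentage(320, 500))
# hypothetical country with only 99 valid direct comments -> -10 sentinel
print(joke_percentage(30, 99))
```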

Horizontal Bar Charts Using Matplotlib

To plot bar charts for the top commenting countries and the most humorous countries, I used pyplot.barh from matplotlib. Following the DRY (Don't Repeat Yourself) principle, I first wrote a common bar plotting function and used it for both charts with different data. The code for this is below.

import matplotlib.pyplot as plt

# global font settings
plt.rcParams["font.family"] = "Noto Sans"
plt.rcParams["font.size"] = 16


def bar_plot(index_series, value_series, title, filename):
    # add a figure to the plotter,
    # size based on no. of items
    if len(index_series) < 5:
        plt.figure(figsize=(9, 5))
    else:
        plt.figure(figsize=(9, 12))

    # create horizontal bar plot
    # (named 'bars' so it does not shadow the function name)
    bars = plt.barh(
        index_series,
        value_series,
        color="#ffc91f",
        height=0.5,
    )

    # format the axes: hide the x-axis, all four spines and the y-axis ticks
    ax = plt.gca()
    ax.get_xaxis().set_visible(False)
    for side in ["top", "right", "bottom", "left"]:
        ax.spines[side].set_visible(False)
    plt.tick_params(left=False)

    # add numbers to each bar
    plt.bar_label(bars, padding=10)
    plt.title(title, loc="left")
    plt.savefig(filename, bbox_inches="tight")

    # close the figure so figures don't accumulate between plots
    plt.close()

Then, I calculated the top commenting countries and most humorous countries and rendered the bar charts to files using the above function:

# first select & plot the UK and Ireland countries
df2 = df.loc[["England", "Ireland", "Scotland", "Wales"]].sort_values(
    by="Joke Percentage",
    ascending=False,
)
bar_plot(
    df2.index[::-1],
    df2["Joke Percentage"][::-1],
    "Percentage of Attempted Jokes: UK and Ireland",
    "uk_and_ireland.png",
)

# drop England, Wales & Scotland:
# map file does not have their discrete boundaries
df.drop(["England", "Wales", "Scotland"], inplace=True)

# calculate top 20 commenting countries
active_commenters = (
    df[["Comments Per Thread"]]
    .sort_values(by="Comments Per Thread", ascending=False)
    .head(20)
)

# plot bar chart for top commenting countries
bar_plot(
    active_commenters.index[::-1],
    active_commenters["Comments Per Thread"][::-1],
    "Most Comments Per Thread in Top 50 Threads",
    "comments_per_thread.png",
)

# calculate top 20 countries with highest joke percentage
top_joke_percents = (
    df[["Joke Percentage"]]
    .sort_values(by="Joke Percentage", ascending=False)
    .head(20)
)

# plot bar chart for top 20 humorous countries
bar_plot(
    top_joke_percents.index[::-1],
    top_joke_percents["Joke Percentage"][::-1],
    "Top 20 Humorous Countries (Joke %)",
    "joke_percentage_bar.png",
)

Choropleth Map Using Geopandas and Geoplot

To plot the joke percentages on the world map, I used the map files of the World Bank’s Official Boundaries from its data catalog. I converted the data from shapefile format to GeoJSON using mapshaper, then did some minor metadata editing to map the names in the country-subs.csv file to their respective geometries in the GeoJSON file. With this, I joined the data using geopandas and plotted the choropleth using geoplot:
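Before merging, it helps to list which country names fail to match between the two sources, since an inner join silently drops any country whose name differs. A minimal standard-library sketch: the `ALIASES` table, the helper `unmatched_names`, and the sample names are hypothetical stand-ins for the manual metadata edits described above (the real map file may already use the same spelling).

```python
from typing import Dict, Iterable, List, Tuple

# Hypothetical alias table: CSV name -> name used in the map file (illustration only).
ALIASES: Dict[str, str] = {
    "United States": "United States of America",
}


def unmatched_names(
    csv_names: Iterable[str], map_names: Iterable[str], aliases: Dict[str, str]
) -> Tuple[List[str], List[str]]:
    """Return (CSV names with no map geometry, map names with no CSV row), both sorted."""
    csv_list = list(csv_names)
    map_set = set(map_names)
    translated = {aliases.get(name, name) for name in csv_list}
    missing_geometry = sorted(n for n in csv_list if aliases.get(n, n) not in map_set)
    missing_data = sorted(map_set - translated)
    return missing_geometry, missing_data


print(unmatched_names(
    ["India", "United States", "Wales"],
    ["India", "United States of America", "Chile"],
    ALIASES,
))  # prints (['Wales'], ['Chile'])
```

In pandas, a similar check can be done by merging with `how="outer", indicator=True` and inspecting rows whose `_merge` value is not `"both"`.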

import geopandas as gpd
import geoplot as gplt
import geoplot.crs as gcrs
import matplotlib.pyplot as plt
from matplotlib.colors import BoundaryNorm, ListedColormap
from matplotlib.ticker import FuncFormatter

# read map file as a geopandas GeoDataFrame
map_df = gpd.read_file("world_map.geojson")

# filter out entries without a geometry
map_df = map_df.loc[map_df["geometry"].notna()]

# merge map geometry with country-wise aggregate data
merged_df = map_df.merge(
    df,  # country-wise data produced earlier, indexed by "Country Name"
    left_on="name",  # map_df column name to match
    right_index=True,  # match against df's index, which holds the country names
)

# discrete bins and colors for plotting
# -10 means "Not enough data", i.e. <100 valid comments
# 64% is the highest value in the dataset
bounds = [
    -10,
    0, 5, 10, 15,
    20, 25, 30, 35,
    40, 45, 50, 55,
    60, 65,
]
cmap = ListedColormap([
    "#d0d0d0",
    "#ccffec", "#adffe1", "#8fffd6", "#70ffcb",
    "#52ffbf", "#33ffb4", "#14ffa9", "#00f59b",
    "#00d688", "#00b874", "#009961", "#007a4e",
    "#005c3a", "#003d27",
])


# function for legend text based on percentage
def legend_format(val, pos):
    return f"{val:g}%" if val >= 0 else "Not Enough Data"


gplt.choropleth(
    merged_df,
    extent=(-180, -58, 180, 90),
    projection=gcrs.PlateCarree(),
    hue="Joke Percentage",  # column to be used for color coding
    norm=BoundaryNorm(bounds, cmap.N),
    cmap=cmap,
    linewidth=0,
    edgecolor="black",
    figsize=(16, 9),
    legend=True,
    legend_kwargs=dict(
        spacing="proportional",
        location="bottom",
        format=FuncFormatter(legend_format),
        aspect=40,
        shrink=0.75,
    ),
)

plt.title(
    "Percentage of comments in each country's subreddit that are attempted jokes",
    loc="left",
)
plt.savefig("jokes_choropleth.png")

In the above code, even though gplt is used for the choropleth, plt is used for setting the title and saving the figure. This is because geoplot builds on top of matplotlib’s pyplot.

Conclusion

In this article, we discussed our findings about the humor quotient of different countries as seen on Reddit and analyzed by an LLM. The United States and Australia topped the chart of attempted humor, with 64% of analyzed comments classified as jokes. We saw that the trends are mostly geographically contiguous, with other variables such as language and script possibly playing a role in the results.

If you looked into the methods used, you learned about various tools for using APIs, prompt engineering with LLMs, handling large volumes of data, and visualizing geographical data with Python. Most importantly, we hope you learned how to break down a complex task into simple steps and pick a suitable tool for each step.
