Finding the best Walmart API gets confusing fast. Walmart has official APIs. Scraping vendors also sell "Walmart APIs" that are really scraping endpoints. Both can be valid, but they solve different problems. In this guide, I compare official options and the best Walmart scraping API options for three common jobs: pulling product details, collecting search results, and running price tracking over time. I also cover how a web scraping API fits when you need flexibility inside your own scripts.

Quick Answer: What is the Best Walmart Scraper?

For the best Walmart API, I pick based on permission and scope. If you're building an approved seller or partner integration, Walmart's official APIs make sense because they are designed for operational workflows and platform access.

If you need broad catalog visibility, search result capture, or custom price tracking, you can reach for a scraping API. In that world, the best Walmart product data API is the one that survives anti-bot friction and returns consistent structure. The shortlist below is the fastest way to narrow it down.

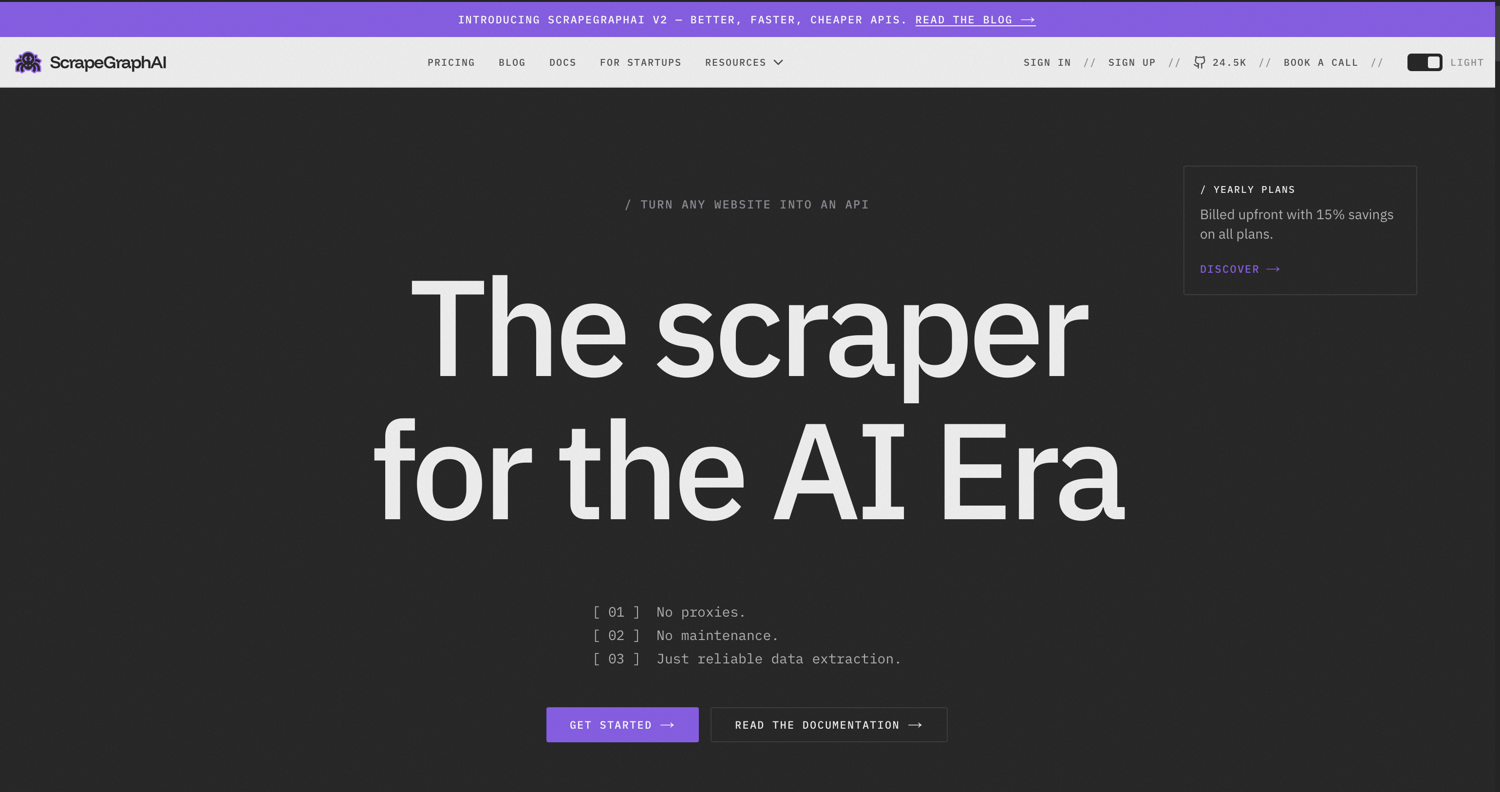

Shortlist

| Tool | One-line summary |
|---|---|
| ScrapingBee | Flexible best Walmart scraping API pattern for developers who want to build their own monitoring scripts with rotating proxies and headless rendering. |
| Oxylabs | Enterprise infrastructure with dedicated Walmart parsers and large proxy networks for structured extraction at scale. |
| ScrapeGraphAI | AI-assisted extraction approach that can reduce selector maintenance when pages change. |
| Apify | Marketplace of Walmart-focused actors that can be run in the cloud and integrated via API. |
| Firecrawl | Crawling and extraction API that focuses on turning site content into structured, LLM-ready outputs. |
| ScrapeStorm | No-code desktop-style scraping option for teams that prefer point-and-click workflows. |
| Octoparse | Popular no-code scraper with templates, but Walmart anti-bot reliability can vary on complex flows. |
| Browse AI | Web scraping and monitoring platform that supports extracting data and monitoring webpage changes. |
| ScrapeHeroCloud | Cloud-based scraping tool with pre-built scrapers and APIs for popular sites, explicitly including Walmart. |

Top Walmart Scraping Tools

1. ScrapingBee

ScrapingBee is a web scraping API that handles headless browsers and rotating proxies. Some Walmart pages rely on JavaScript, so headless rendering matters. Other request patterns trigger blocks, so proxy rotation matters too. That is why using it as a flexible Walmart scraping layer inside a custom monitoring stack makes sense. It is not a native monitoring tool, but it still supports uptime and reliability tracking when custom scripts log success rate, latency, retries, and content diffs.

ScrapingBee has a dedicated Walmart scraping API option to retrieve structured product data. That reduces parsing work when the goal is repeatable fields instead of raw HTML. HTML stays flexible, but parsing stays on me. Structured output is less flexible, but it is faster to consume.

Pros:

- Works well in custom stacks with retry logic, alerting, and storage under my control.

- Headless and proxy handling are abstracted away, so data validation gets the focus.

- Pairs with AI web scraping when selectors get brittle and layout changes happen.

Cons:

- A usage dashboard exists, but monitoring dashboards and alerting for your data pipeline are not bundled.

Pricing:

- ScrapingBee uses a credit-based subscription model with four tiers: Freelance ($49/month), Startup ($99), Business ($249), and Business+ ($599+).

- A free trial offers 1,000 credits with no credit card required.

Picture this: you're monitoring Walmart's catalog for price and stock changes. You scrape product pages on a schedule, extract price and availability, then compare the new data to what you captured before. When a product goes out of stock or a price crosses a threshold you set, you trigger an alert. The risk? If the scraper misreads a price, your alert fires on phantom data instead of real change.
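The comparison step in that scenario can be sketched in a few lines. This is a minimal illustration, not a production monitor: the `Snapshot` fields, the alert labels, and the `max_jump_ratio` guard are all hypothetical names chosen for the example. The guard is one simple way to keep a misread price from firing a phantom alert, by flagging implausible jumps for a re-check instead of alerting on them.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Snapshot:
    """One observation of a product page (hypothetical field names)."""
    product_id: str
    price: Optional[float]  # None means the price could not be parsed
    in_stock: bool


def detect_alerts(prev: Snapshot, curr: Snapshot,
                  price_threshold: float,
                  max_jump_ratio: float = 0.5) -> List[str]:
    """Compare two snapshots of the same product and return alert labels."""
    alerts: List[str] = []

    prices_usable = (
        prev.price is not None and curr.price is not None
        and prev.price > 0 and curr.price > 0
    )
    if not prices_usable:
        # Missing or non-positive prices are treated as scraper errors.
        alerts.append("suspect_price_missing")
    else:
        relative_change = abs(curr.price - prev.price) / prev.price
        if relative_change > max_jump_ratio:
            # Implausibly large move: re-scrape before alerting anyone.
            alerts.append("suspect_price_jump")
        elif curr.price <= price_threshold < prev.price:
            # Price moved from above the threshold to at or below it.
            alerts.append("price_crossed_threshold")

    if prev.in_stock and not curr.in_stock:
        alerts.append("out_of_stock")
    return alerts
```

The 50% default for `max_jump_ratio` is an arbitrary starting point. A category with frequent deep discounts would need a looser value.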

2. Oxylabs

Oxylabs is designed for enterprise-scale operations where infrastructure depth is the primary requirement. It provides a massive proxy network and dedicated eCommerce APIs, including Walmart-specific endpoints that return parsed JSON for product and search pages.

The system is built for stability at volume, making it a fit for teams that prioritize high success rates and throughput over a minimal setup.

Pros:

- Dedicated Walmart parsing reduces the need for custom extraction logic.

- Extensive proxy scale and geographic targeting.

- High reliability for large-scale data collection.

Cons:

- Pricing and onboarding are geared toward larger organizations.

- May be over-engineered for small, one-off projects.

Pricing:

- Oxylabs Web Scraper API runs on feature-based, result-based billing starting at $49/month (roughly $1.60 per 1,000 results). Residential proxies start at $6/GB. A free trial with 2,000 results is available.

Ideal use cases are large product catalogs, multi-country price intelligence, or analytics systems where Walmart is just one source among many. In those cases, the best Walmart product data API is the one that keeps running without babysitting.

3. ScrapeGraphAI

ScrapeGraphAI takes an AI-assisted approach in which you describe the target data instead of hand-coding selectors, which helps with brittle extraction logic. Because Walmart periodically updates its page layouts, AI-based extraction can adapt to DOM shifts. However, it still requires a robust request strategy to manage evolving anti-bot controls.

Pros:

- It can reduce selector maintenance when the DOM shifts, especially for "grab these fields" workflows.

- It fits teams that already work with LLM-style extraction and want faster iteration.

Cons:

- Output consistency can vary if prompts are vague. That matters when I need stable schemas.

- Cost and latency can be higher if every request includes AI inference, depending on how I implement it.

Pricing:

- ScrapeGraphAI is credit-based at 10 credits per page. Plans include Free (500 credits/month), Starter ($20), Growth ($100), Pro ($500), and custom Enterprise. Pricing drops as low as ~2.5¢ per page at scale.

4. Apify

Apify uses a marketplace model, allowing users to select from various "actors" tailored to specific Walmart use cases (e.g., search results vs. product details). These actors run in Apify's cloud and can be integrated via API, making it a convenient choice for rapid prototyping.

Pros:

- Large ecosystem of pre-built scripts for different input needs.

- Cloud-native execution removes the need to manage local runtimes.

Cons:

- Reliability varies depending on the maintenance of individual actors.

- Complex workflows may require vetting multiple community-made scripts.

Pricing:

- Apify uses pay-as-you-go compute units across four tiers: Free ($5 monthly credits), Starter ($29), Scale ($199), and Business ($999). Enterprise pricing is custom. Actors in the marketplace may charge separate rental fees.

5. Firecrawl

Firecrawl focuses on crawling and extraction workflows. I think of it as a structured crawling layer that helps collect content and return it in formats that are easier to feed into downstream systems.

Pros:

- Strong story around crawling at scale and returning clean outputs for automation.

- Works well when the job is to pull lots of pages and turn the results into the same clean format, especially for non-interactive pages.

Cons:

- May require additional custom parsing for specific eCommerce fields.

- Effectiveness depends on the tool's ability to combat Walmart's anti-bot protection.

Pricing:

- Firecrawl runs on credit-based subscriptions: Free (500 credits), Hobby ($16/month), Standard ($83), Growth ($333), and custom Enterprise. One credit per page under standard scraping, with extraction and advanced features consuming more.

I include it in the best Walmart scraping API conversation when the primary job is site crawling and content structuring, not perfect product schema extraction.

6. ScrapeStorm

ScrapeStorm is a visual, no-code scraper. It is designed for users who need a point-and-click interface to build their extraction logic. It is useful for small, repeatable tasks where the structure of the target page is relatively stable.

Pros:

- Faster onboarding for non-developers.

- Works well for small, repeatable pulls where the page structure is stable.

Cons:

- Walmart anti-bot resistance can be the limiting factor, not the UI.

- Desktop-style automation can struggle when the site changes frequently.

Pricing:

- ScrapeStorm offers a free Starter plan plus three paid tiers: Professional ($45/month), Premium ($89), and Business ($179). Annual billing drops prices to $39, $79, and $158 respectively. Custom enterprise pricing is available.

7. Octoparse

Octoparse is a well-known point-and-click scraping tool with templates and a wide user base. G2 reviews consistently emphasize usability and no-code access, with notes about a learning curve for advanced flows.

Pros:

- Good for quick extraction setups with a visual workflow.

- Helpful for teams that want to iterate without writing code.

Cons:

- Walmart anti-bot challenges can show up as instability in runs.

- Scaling to many concurrent scraping jobs can become operational work, even if the UI is friendly.

Pricing:

- Octoparse has a free plan plus paid tiers: Standard ($119/month, $89 annually) and Professional ($299/month, $209 annually). Enterprise pricing is custom. Plans differ by task limits, cloud extraction, and concurrent device counts.

I treat it as a best Walmart API option only for small-to-mid tasks where speed and accessibility matter more than deep reliability.

8. Browse AI

Browse AI is more automation-forward in how people use it. I think of it as "watch this page, extract these elements, repeat," with a product posture that fits monitoring workflows more directly than raw developer scraping APIs.

Pros:

- Good for lightweight monitoring tasks and simple schedules.

- Can work well when the target pages are consistent and the extraction rules are simple.

Cons:

- Less developer-native than pure APIs if I need full control over retries, storage, and concurrency.

- Walmart friction can still cause variability, and I may end up needing deeper controls than a monitoring UI offers.

Pricing:

- Browse AI uses credit-based plans: a free tier plus Starter ($48/month), Professional ($87), and Team (~$249), with custom Enterprise pricing. Annual prepayment knocks 20% off, and additional discounts cover nonprofits and startups.

For teams comparing it to developer tools, the intent is different. It can support a best Walmart price tracker workflow with simple monitoring, but it is not the same as a fully custom pipeline.

9. ScrapeHeroCloud

ScrapeHeroCloud operates as a data-as-a-service provider. It offers pre-built scrapers and managed delivery, handling the underlying extraction mechanics so the user receives a finalized dataset.

Pros:

- Good fit when I want managed delivery instead of building scrapers.

- Support and service feel like part of the product.

Cons:

- Less flexible if I need custom fields or experimental collection logic.

- I trade control for convenience, which can matter for bespoke analytics.

Pricing:

- ScrapeHero Cloud is credit-based, starting at $5/month for 4,500 credits, with a free tier offering 400 credits. ScrapeHero's separate managed scraping service begins at $199/month per site or $550 on-demand.

If your goal is structured datasets with minimal engineering effort, this can be a practical alternative to a self-built Walmart product data API integration.

Why Is Walmart So Popular?

Walmart is popular because it is massive and it moves fast, and that scale shows up in the numbers. The company reported fiscal 2025 revenue of $681 billion, and it says around 270 million customers and members visit its stores and eCommerce sites each week.

That scale turns Walmart into a living dataset. Prices move. Availability changes by location. Search rankings shift with promotions. Developers chase this data for competitive intelligence, price comparison, assortment analytics, and operational insights. In practice, the best Walmart product data API is often the one that can keep up with that churn without corrupting the dataset with partial failures.

How to Choose the Best Walmart Scraper?

This choice gets easier with a checklist. Here's an example.

Legality and permissions

First, the right lane. Official Walmart Marketplace APIs are intended for sellers and approved partners managing operational workflows like items and inventory. If that is not the situation, a scraping approach is the more realistic path.

Reliability under anti-bot controls

Next comes resilience. Success rate, retry rate, and incomplete responses tell the truth fast. A demo run does not.
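As a rough sketch of how those three numbers can be computed from request logs: the `RequestLog` shape below is an assumption for illustration, and your own logs will likely carry more fields (latency, status code, proxy region).

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RequestLog:
    """Outcome of one logical request (hypothetical log shape)."""
    ok: bool        # the final attempt returned a usable response
    attempts: int   # total attempts, including the first
    complete: bool  # every required field was present after parsing


def reliability_summary(logs: List[RequestLog]) -> Dict[str, float]:
    """Summarize success, retry, and incomplete-response rates."""
    total = len(logs)
    if total == 0:
        return {"success_rate": 0.0, "retry_rate": 0.0, "incomplete_rate": 0.0}

    successes = sum(1 for log in logs if log.ok)
    retried = sum(1 for log in logs if log.attempts > 1)
    # Incomplete responses are measured among successes only, because a
    # failed request never produced anything to parse.
    incomplete = sum(1 for log in logs if log.ok and not log.complete)

    return {
        "success_rate": successes / total,
        "retry_rate": retried / total,
        "incomplete_rate": incomplete / successes if successes else 0.0,
    }
```

Tracking the incomplete rate separately matters because a response can count as a success at the HTTP level and still be useless for the dataset.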

Data structure and validation

Then the schema. "Correct" needs a definition. Required fields get enforced, missing fields get logged, and malformed outputs get rejected. That usually matters more than raw request success.
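One way to make "correct" concrete is a small validator that enforces required fields, logs what is missing, and rejects malformed records. The field names in `REQUIRED_FIELDS` and the logger name are illustrative assumptions, not a schema any vendor returns.

```python
import logging
from typing import Any, Dict, Optional

logger = logging.getLogger("walmart_validation")  # hypothetical logger name

# Hypothetical strict schema: field name -> accepted Python type(s).
REQUIRED_FIELDS = {"title": str, "price": (int, float), "in_stock": bool}


def validate_record(raw: Dict[str, Any]) -> Optional[Dict[str, Any]]:
    """Return a cleaned record, or None if the record must be rejected."""
    missing = [name for name in REQUIRED_FIELDS if raw.get(name) is None]
    if missing:
        logger.warning("rejected: missing fields %s (url=%r)", missing, raw.get("url"))
        return None

    for name, expected in REQUIRED_FIELDS.items():
        value = raw[name]
        # bool is a subclass of int in Python, so True must not pass as a price.
        wrong_type = not isinstance(value, expected)
        bool_as_price = name == "price" and isinstance(value, bool)
        if wrong_type or bool_as_price:
            logger.warning("rejected: field %r has bad type %s", name, type(value).__name__)
            return None

    if raw["price"] <= 0:
        logger.warning("rejected: non-positive price %r", raw["price"])
        return None

    # Keep only schema fields so stray keys never leak into storage.
    return {name: raw[name] for name in REQUIRED_FIELDS}
```

A price that arrives as the string `"9.99"` is rejected here rather than coerced. That is a deliberate design choice for this sketch: silent coercion tends to hide parser regressions.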

Scalability and concurrency

After that, volume behavior. Some tools look fine at 100 requests, then wobble at 10,000. The only way to know is to ramp gradually and watch failure patterns.
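A gradual ramp can be as simple as the loop below. It is a sketch under stated assumptions: `run_batch` is a hypothetical callable you would supply, which sends `n` requests and returns the observed failure rate, and the 5% stop threshold is an arbitrary default.

```python
from typing import Callable, List, Tuple


def ramp_up(run_batch: Callable[[int], float],
            start: int = 100,
            ceiling: int = 10_000,
            factor: int = 2,
            max_failure_rate: float = 0.05) -> List[Tuple[int, float]]:
    """Grow the batch size geometrically and stop when failures spike.

    Returns the (batch_size, failure_rate) history so the failure
    pattern can be inspected afterwards.
    """
    history: List[Tuple[int, float]] = []
    size = start
    while size <= ceiling:
        failure_rate = run_batch(size)
        history.append((size, failure_rate))
        if failure_rate > max_failure_rate:
            # Stop ramping; the last entry shows where things wobbled.
            break
        size *= factor
    return history
```

The last entry in the returned history marks the volume at which the tool started to degrade, which is the number a demo run will not show you.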

Compliance posture

Compliance is not optional. If personal data is collected or processed, GDPR principles like purpose limitation and data minimization apply. If the workflow touches outreach, CAN-SPAM rules matter too, especially opt-outs and accurate headers. G2 reviews help sanity-check reliability claims and common pain points.

Cost and predictability

Finally, cost modeling. Predictable pricing under retries is easier to operate. The real cost also includes parsing time, reprocessing time, and engineering time.
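To put a number on retry-adjusted cost, a back-of-the-envelope model helps. The sketch below assumes every attempt is billed, which is a worst-case assumption because some vendors only charge for successful requests. It covers request spend only, not parsing or engineering time, and all parameter names are illustrative.

```python
def cost_per_valid_record(price_per_request: float,
                          avg_attempts: float,
                          valid_fraction: float) -> float:
    """Effective request cost for one record that passes validation.

    price_per_request: what one billed attempt costs
    avg_attempts:      mean attempts per logical request (>= 1)
    valid_fraction:    share of logical requests yielding a valid record
    """
    if avg_attempts < 1:
        raise ValueError("avg_attempts must be at least 1")
    if not 0 < valid_fraction <= 1:
        raise ValueError("valid_fraction must be in (0, 1]")
    return price_per_request * avg_attempts / valid_fraction


def monthly_cost(valid_records_needed: int,
                 price_per_request: float,
                 avg_attempts: float,
                 valid_fraction: float) -> float:
    """Request spend needed to collect the target number of valid records."""
    return valid_records_needed * cost_per_valid_record(
        price_per_request, avg_attempts, valid_fraction
    )
```

For example, at a hypothetical $0.002 per attempt, 1.5 attempts on average, and 75% of requests yielding a valid record, the effective cost doubles to roughly $0.004 per usable record.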

Use ScrapingBee to Scrape Walmart

I'd use ScrapingBee's API as the fetch layer, then wrap it with my own scheduling and parsing. The technical walkthrough in How to Scrape Walmart provides a blueprint for this implementation. A small list of product URLs goes into a script. Each run requests the pages, parses the response into a strict schema, and stores the fields with a timestamp.

For price monitoring, this setup can function as the best Walmart price tracker when it stays consistent under retries and blocking. Yesterday's stored price gets compared to today's price. Threshold rules decide when an alert triggers. The same pattern works for search pages, as long as extraction stays consistent and the script logs success rate, latency, and missing-field anomalies.

Custom coding is required for scheduling, validation, storage, and alerts. ScrapingBee provides the retrieval layer, while the monitoring workflow lives in the code around it.
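The fetch, parse, and store loop described in this section could look roughly like the sketch below. Treat it as a starting point under assumptions: the endpoint and the `api_key`, `url`, and `render_js` parameters reflect ScrapingBee's HTML API as I understand it, so verify them against the current documentation. The `parse` callable, the `observations` table, and the example product URL in the usage note are hypothetical.

```python
import json
import sqlite3
from datetime import datetime, timezone
from typing import Any, Callable, Dict, Iterable
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed endpoint; confirm against the vendor's current docs.
API_ENDPOINT = "https://app.scrapingbee.com/api/v1/"


def build_request_url(api_key: str, target_url: str, render_js: bool = True) -> str:
    """Build the GET URL that asks the API to fetch target_url."""
    params = {
        "api_key": api_key,
        "url": target_url,
        "render_js": str(render_js).lower(),
    }
    return f"{API_ENDPOINT}?{urlencode(params)}"


def fetch_html(request_url: str, timeout: float = 60.0) -> str:
    """Perform the HTTP request and return the body as text."""
    with urlopen(request_url, timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")


def run_once(urls: Iterable[str],
             api_key: str,
             parse: Callable[[str], Dict[str, Any]],
             db: sqlite3.Connection,
             fetch: Callable[[str], str] = fetch_html) -> int:
    """Fetch each URL, parse it, and store the fields with a UTC timestamp."""
    db.execute(
        "CREATE TABLE IF NOT EXISTS observations "
        "(url TEXT, fetched_at TEXT, fields TEXT)"
    )
    stored = 0
    for url in urls:
        html = fetch(build_request_url(api_key, url))
        fields = parse(html)  # your own parser; should enforce the schema
        fetched_at = datetime.now(timezone.utc).isoformat()
        db.execute(
            "INSERT INTO observations VALUES (?, ?, ?)",
            (url, fetched_at, json.dumps(fields)),
        )
        stored += 1
    db.commit()
    return stored
```

A scheduler (cron, a task queue, or similar) would call `run_once` with something like `["https://www.walmart.com/ip/123"]` and a real parser. Passing `fetch` as a parameter keeps the loop testable without network access, and retries and alerting would wrap around this function.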

Food for Thought

In 2026, I expect the gap between "API" and "scraper" to keep shrinking in marketing language, while getting wider in operational reality. Official APIs will remain gated to approved workflows. Scraping tools will keep leaning into structured outputs and AI-assisted extraction. At the same time, compliance pressure will increase, especially around personal data handling and retention. GDPR remains the baseline framework for data protection principles in many teams, even outside the EU.

I also expect more restrictions at the edge. More dynamic rendering. More bot detection. More rate shaping. That pushes teams toward responsible automation: clear purpose, minimal data collection, and respectful request rates. If I need a flexible building block for structured product extraction, I look at a Walmart scraping API and then I design my pipeline to be conservative and auditable.

Ready to Build Your Walmart Data Pipeline?

A Walmart data pipeline needs boring reliability. Requests should succeed consistently, outputs should validate cleanly, and failures should be visible in logs. ScrapingBee can act as the best Walmart scraping API building block in that setup, since it handles the retrieval layer while monitoring workflows stay in custom code. That keeps scaling straightforward: ramp concurrency gradually, keep parsing strict, and treat success rate, latency, retries, and schema drift as first-class metrics. Start with a small batch, verify outputs against your schema, then expand volume with confidence. Sign up today - get free credits.

Best Walmart Scraper FAQs

Does Walmart have a product API?

Yes, but "product API" depends on context. Walmart has official API programs for approved use cases, including Marketplace APIs that support seller and partner workflows around items, inventory, pricing, and reporting.

What are Walmart API limitations?

Official Walmart APIs are typically designed for operational integrations, not open-ended catalog intelligence. Marketplace APIs focus on seller and partner activities like item management, inventory, orders, and reporting. That means they may not provide broad search result capture or competitor-style monitoring. Even when endpoints exist, access can be gated, rate limited, and tied to account permissions.

What data can be collected from Walmart?

For Walmart pages, teams commonly collect product titles, prices, availability signals, ratings, review counts, seller info, and search result rankings. What you can collect safely depends on source type and compliance. If personal data is involved, I apply GDPR principles like data minimization and purpose limitation. I also avoid collecting more than I need for the intended analytics task.

Do I need to know how to code to scrape Walmart?

Not always. No-code tools can work for small tasks, especially when templates exist and the pages are stable. But if I need a robust pipeline, I usually write code. Coding lets me add validation, retries, change detection, and storage logic. It also helps me respond faster when Walmart changes layouts or anti-bot behavior.

Is it legal to scrape Walmart?

Legality depends on jurisdiction, what data you collect, and how you collect it. I treat this as a compliance question, not just a technical one. I check site terms, avoid abusive request rates, and avoid collecting personal data without a clear lawful basis. GDPR sets strict requirements when personal data is processed. For outreach use cases, CAN-SPAM rules apply to commercial email behavior.

What is the best Walmart price tracker API?

To choose a Walmart price tracker API, I split the decision into two paths. If I'm eligible for official endpoints that expose price and availability for my integration, that can be simplest. If I need independent tracking across many products and search results, I use a scraping API as the retrieval layer and build my own tracker logic. In that model, stability under anti-bot controls matters more than any single feature.

Jakub is a Senior Content Manager at ScrapingBee, a T-shaped content marketer deeply rooted in the IT and SaaS industry.